Over time, Google has increasingly shown preference for sites that provide users with a good experience, whether they’re on desktop or mobile. Making sure your site is responsive across a variety of devices and screen sizes is an important part of that, and can even play a role in your site’s overall conversion rate. We’ve helped a handful of clients ensure that their mobile and responsive sites are ready to provide customers with an enjoyable and easy experience as they shop online.

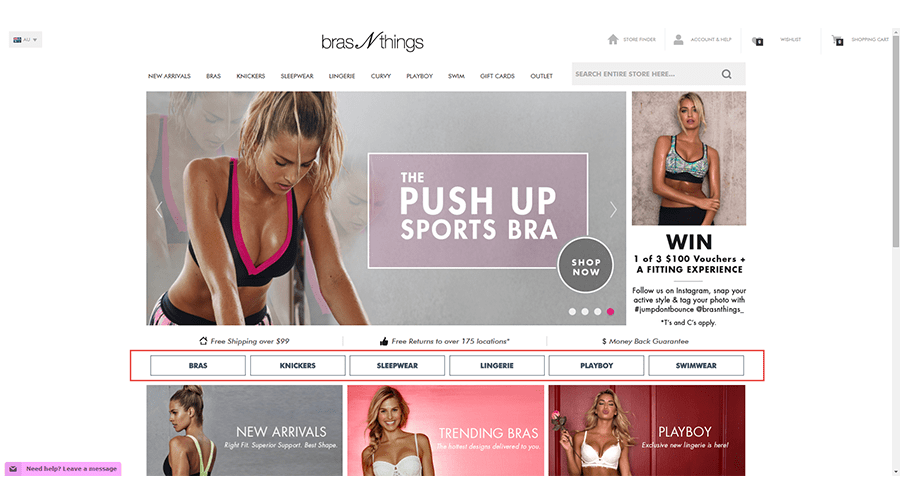

The Client: Bras N Things

Bras N Things has been Australia’s bra-fitting specialist for more than 25 years.

Inflow’s CRO team was asked to optimize BrasNthings.com during the launch of a new responsive site to help identify any post-launch issues.

Because this was one of the earlier responsive eCommerce redesigns, there were no established best practices to follow.

In addition to being responsive, the redesign also introduced a new brand experience and new core navigational elements.

The Inflow CRO Solution

Focus on your user

This is the most important piece of conversion optimization: if you don’t provide what your user wants, the quality of your product and the look of your site won’t matter. There are a number of tools to help you better understand your users, including surveys, screen captures and website analytics. We used these tools to optimize the homepage and category pages so users could find products more easily and stop “pogo-sticking” between areas of the site.

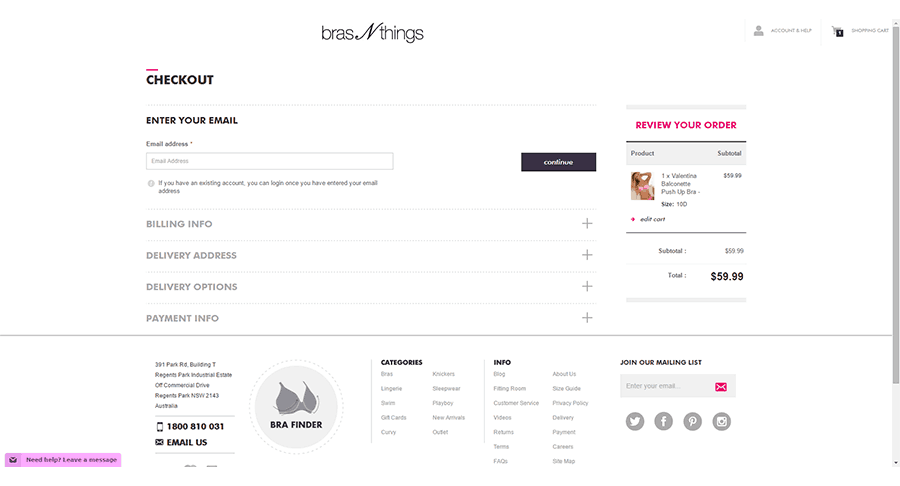

Remove distractions from your preferred path

This is most commonly applied in checkout flows, but some sites have other flows, such as custom builders, that require similar treatment. You can see there are very few places to exit the checkout at this point.

Prioritize your work based on traffic

Analytics plays an important role in determining where to focus. If your paid search drives a lot of traffic that doesn’t convert, it’s possible you aren’t doing enough to gain those visitors’ trust. If the majority of your purchases come through email, maybe the focus should be on growing that list and streamlining the flow for those users.

Similarly, device type is becoming a very important piece of the puzzle. For most retailers, mobile has overtaken desktop in traffic; however, mobile conversion rates and revenue still trail desktop significantly.

With Bras N Things, the second half of 2015 focused largely on improvements to the mobile experience that would help users find products, since product discovery seemed to be the largest drop-off point.

Validate all changes

Regardless of how great an idea may seem, there’s always a chance it doesn’t pan out. Even CRO experts don’t get it right 100 percent of the time; our success rate is somewhere around 80 percent. To help mitigate this risk, we recommend validating all changes before making them permanent. The most reliable way is A/B testing, although there are many other methods you can use. Even if you have done A/B testing, we recommend reviewing the data again after the release.

This applies not only to changes you’d like to make to your own pages, but also to vendors you might use. Recently with Bras N Things, we determined that one of the checkout vendors was not driving additional purchases but was resulting in significantly more merchandise returns. Since it was not driving incremental revenue, it was clear that we needed to remove it.

The Result?

Three years of testing produced a 90 percent increase in conversion rate. That figure is meaningful because it is a conservative estimate of how much each test actually affected the bottom line. There are two factors at play here:

1) A test on the checkout page likely impacts 100 percent of your eCommerce transactions, but not every user who makes a purchase sees the homepage. As such, when determining the impact of each test, we factor in the eCommerce Participation Rate for the test page. If only 50 percent of your transactions involve the homepage we tested, then the impact on the bottom line is half of what we measured.

2) We always report conservative lift figures, not measured lift. What does this mean? When we test, we’re taking a sample of the total population, which means it may not be 100 percent representative of your users. Based on what’s measured, we determine a range within which we expect the true conversion rate to fall (the 95 percent confidence interval), then report the low end of that range. This is a statistically sound way to arrive at a conservative estimate of the impact we can expect to have.
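To make the two factors concrete, here is a minimal sketch of how they might be combined. It is an illustration only, not the exact calculation used for this client: the interval method (a normal approximation for the difference between two conversion rates), the function names `conservative_lift` and `sitewide_impact`, and the visitor and order counts are all assumptions made for the example. Only the 95 percent confidence level and the 50 percent participation rate come from the description above.

```python
from math import sqrt
from statistics import NormalDist


def conservative_lift(control_orders, control_visitors,
                      variant_orders, variant_visitors,
                      confidence=0.95):
    """Return the measured relative lift and the low/high ends of its interval.

    Assumed method: a normal-approximation confidence interval for the
    difference between two independent conversion rates.
    """
    p_control = control_orders / control_visitors
    p_variant = variant_orders / variant_visitors
    diff = p_variant - p_control

    # Standard error of the difference between two independent proportions.
    std_err = sqrt(p_control * (1 - p_control) / control_visitors
                   + p_variant * (1 - p_variant) / variant_visitors)

    # Two-sided critical value, roughly 1.96 at 95 percent confidence.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)

    # Express results relative to the control rate, which is treated as
    # fixed here (a simplification).
    return {
        "measured": diff / p_control,
        "low": (diff - z * std_err) / p_control,
        "high": (diff + z * std_err) / p_control,
    }


def sitewide_impact(lift, participation_rate):
    """Scale a page-level lift by the share of transactions involving that page."""
    if not 0.0 <= participation_rate <= 1.0:
        raise ValueError("participation_rate must be between 0 and 1")
    return lift * participation_rate


# Hypothetical homepage test: 20,000 visitors per arm, 400 vs. 480 orders.
result = conservative_lift(400, 20_000, 480, 20_000)

# Report the low end of the interval, scaled by a 50 percent participation rate.
reported = sitewide_impact(result["low"], 0.50)

print(f"measured lift:     {result['measured']:.1%}")
print(f"conservative lift: {result['low']:.1%}")
print(f"reported impact:   {reported:.1%}")
```

With these made-up numbers, the reported impact comes out well below the measured lift, which is the point: both adjustments pull the figure down, so whatever is reported is unlikely to overstate the effect on the bottom line.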

Bottom Line

- 90% increase in overall conversion rate from 17 winning tests

- Prevented losses by identifying poorly performing concepts

- Focus on mobile drove 4x mobile revenue increase

- Overall, 60% revenue increase

Is your eCommerce website set up to provide a good experience for visitors? Is a poor experience causing you to lose customers? Use the form below to contact our team of conversion optimization experts!
