Ideally a blog post should be written and formatted for the web, meaning it should be short, snappy and image-rich. This post is exactly the opposite of that. Do as I say, not as I do? After beating myself up about it I concluded that the message I wanted to convey (i.e. Holy CRAP we need to know a lot of stuff these days!) is best “illustrated” as a wall of text.

One of the exciting projects we’re working on here at seOverflow is updating our site audit and ongoing SEO task lists. Since the attrition rate of old tasks isn’t anywhere near the growth rate of new tasks, our new audit task list is three times as long as the one I had a few years ago.

Although a few things have dropped off the agenda (e.g. keyword meta tags), we’re still working on title tags, meta descriptions, robots.txt, robots meta tags, header tags, alt attributes and the myriad other “old-school” on-page factors that have been part of our lexicon for many years.

In fact, I can think of very few things that I used to do as part of an audit or ongoing SEO that I no longer have to do. But I can think of dozens of things that have been added, and most of them are more complex than their predecessors.

I’d like to outline a few of these things and then discuss what we can do about them. But first, let’s have a look at some of those predecessors.

What Doesn’t Work Anymore

Lots of things don’t “work” anymore, or at least not as well as they used to, or not without so much risk as to render them undesirable. This includes keyword stuffing content, site-wide footer text, sponsoring WordPress themes, embedding links in widgets, automated link exchanges, massive article spinning, bait-n-switch 301 redirecting, domain buying for the purpose of redirecting, automated comment spam, mass directory submissions and most paid link networks.

If you have one or two out-of-date SEO tactics in your foundation, it is unlikely to cause your site to come tumbling down as long as you’ve worked hard on other areas. A few directory links and a site-wide footer probably aren’t going to kill your rankings if you’ve spent the last couple of years building a good reputation in the industry by providing outstanding service or products, and useful content.

But old SEO tactics that rely on outsmarting the algorithm are being eroded one update at a time. If you are left with a foundation built upon templated content with the name of a city changed, or thousands of stub pages waiting to be filled with user-generated content that you think will magically appear someday, you may be in for a fall. Frankly, I’d be surprised if you haven’t already had one.

What Has Been Added to the SEO Task List Recently

If you don’t have a cup of coffee, you should go grab one. We’re going to cover a LOT of ground here. Unfortunately, this means we have to sacrifice a little depth to gain the breadth. This post isn’t meant to be a tutorial on every aspect of SEO, but rather a way of putting into perspective the enormous, overwhelming, mind-boggling amount of STUFF on an SEO’s plate these days. Executives and developers need to know this. SEOs need to know this. And most important of all, any moron out there saying “SEO is dead” needs to know this. SEO is like a hydra: every time one task becomes obsolete, several more take its place. Here are some of the examples I’ve come up with, though I’m sure there are many more I’ve left off the list (feel free to add them in the comments section).

Site Performance / Page Load Time

If you don’t think high latency is worth fixing, you might want to read this page, specifically this part: “…that’s why we’ve decided to take site speed into account in our search rankings.” How many other times have you heard Google categorically say that something is a ranking factor? One could argue that they chose to make this particular one public because it is in their best interest to have SEOs speeding up the web for their crawlers, which saves Google money. However, that doesn’t change the fact that site speed is a ranking factor, however small its weight. The percentage of sites affected by this factor is probably still in the single digits, but it is worth your time to make sure your sites aren’t among them. Add site speed to the task list and use the tools below to diagnose and fix problems.

- Google PageSpeed Tool (web based)

- Google PageSpeed (plugins)

- Google mod_pagespeed (Apache module)

- Google Webmaster Tools Site Performance (report)

- YSlow by Yahoo (plugin or command line)

- WebPageTest.org (visual graph of load times by element)
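If you want a quick, scriptable sanity check alongside those tools, here is a minimal Python sketch that times how long a page’s HTML takes to download. It only measures the document itself (server response plus transfer), not images, scripts or rendering, so treat it as a rough first pass rather than a replacement for PageSpeed, YSlow or WebPageTest. The `time_fetch` name and its `opener` parameter are my own; `opener` exists only so the function can be exercised without a network connection.

```python
import time
import urllib.request
from statistics import median


def time_fetch(url, runs=3, opener=urllib.request.urlopen):
    """Return the median wall-clock seconds needed to download `url` in full.

    Measures only the HTML document (server response + transfer time),
    not images, scripts or rendering.
    """
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        with opener(url) as response:
            response.read()  # read the whole body so transfer time is included
        timings.append(time.perf_counter() - start)
    return median(timings)
```

For example, `time_fetch("https://www.example.com/")` returns the median of three downloads in seconds (example.com is just a placeholder). Using the median rather than the mean keeps one slow outlier from skewing the number.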

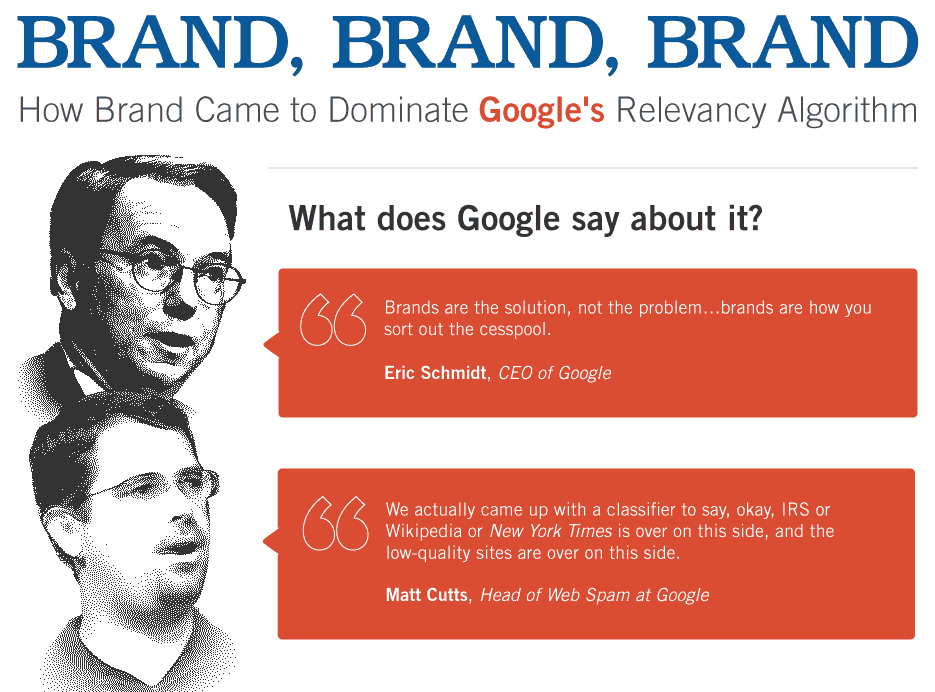

Brand Building / PR

As my grandmother would say, “Goodness gracious!” This is probably at the heart of how our jobs have changed over the last few years. Call it growing up, selling out, or simply losing the battle, but the SEO of today may be just another marketer.

I’ve always disliked marketing because I tend to dislike being told I should want things that I don’t need and can’t afford (or at least shouldn’t be wasting my money on). I mute commercials. I roll my eyes at magazine and newspaper ads. Sometimes I’ll even get downright angry because I feel like marketers insult my intelligence by putting things like “Natural Essential Oil Shampoo” on a product so full of carcinogens I’m surprised they’re even legally allowed to sell it, much less use a word like “natural.”

So have we joined the dark side? Are we just marketers? I like to think we’re not. The first time I ever heard Danny Sullivan speak was at an SES in Chicago and the point of the keynote was about how search is different from other forms of marketing because the audience specifically asks to see whatever it is that you have to offer. They ASK by searching for something, which is entirely different than being bombarded with commercials and glossy ads that you have no desire to see whatsoever. And for this reason I embrace our newfound responsibilities as marketers and brand builders.

What are our tasks related to brand building and PR? First of all, if you think “PR” is writing a press release about how you launched a new page on your site so you can send it out to the nobody-cares list on PR Web or PR Newswire you are on the wrong path. These days SEOs need to either specialize in and understand PR from the traditional standpoint as taught in universities and PR firms around the world, or work with people who do specialize in that area. Frankly, if you have a good PR team to work with you don’t need to hire a link builder. Other tasks include everything from major brand awareness campaigns and choosing branded domains over exact-match domains (EMDs), to simply recognizing when and why to put the brand at the front of a title tag instead of the end.

Search used to be where the playing field was level and mom-n-pop shops could get in front of a huge audience just by being a little bit more agile than the big brands that dominate the landscape off of every major interstate highway exit across the country. Though I find it heartbreaking to admit this, those days are over. You need to build a brand. This is the single most important and single most difficult task that has been added to the SEO agenda over the last few years. And I wish I was better at it. It helps to have resources like the ones below:

- Potential Brand Signals (by Aaron Wall)

- The Next Generation of Ranking Signals (by Rand Fishkin)

- Google Brand Promotion: Why Brands Rank #1 (by SEOBook. Source of graphic above)

- How Brands Became Hardwired in Google SERPs (by Aaron Wall of SEOBook)

- Branding & The Cycle (by Aaron Wall. Can you tell I’m a fan yet?)

- The Public Relations Student Society of America (Awesome bulleted list of resources on that page)

- CisionPoint (Enterprise level public relations software)

- VOCUS (Cloud-based marketing and PR software)

A Little Secret: Before coming over to seOverflow I spent a year as the ~~SEO Director~~ Overpaid PR & Marketing Apprentice for a guy named Luke Knowles. You’ve probably never heard of him, but he started several “super affiliate” sites, including Free Shipping Day, which was bigger than Black Friday (according to comScore) and was responsible for about $942 million in online revenue last year. In 2012 it will probably top $1 billion.

Here’s the secret: I didn’t have to do any link building for his sites. Instead, I worked with a small in-house PR team to pitch article and TV segment ideas to major news outlets. We used CisionPoint to discover and manage these media relationships. Instead of building links directly to the sites, I worked on outreach to make sure articles featuring our websites and TV news segments featuring our in-house ~~talking heads~~ PR experts received as much attention as possible. Part of this involved interlinking the stories so they could prop each other up long enough for viral action to take place. I won’t go into the details of how all of this was done, or what it takes to get someone from your company, or an article about your company, on CNN, ABC, Fox, NBC, NPR, USA Today or the New York Times (all of which we did). But let’s just say that if I had to choose between your average link builder and an expert PR professional who knew how to approach and interact with media outlets and presented well on camera, I’d go for the public relations person any day of the week.

So Why is Everyone Still Link Building? A: Link building is easier. B: Link building is cheaper. C: Not every business is sexy/interesting/new enough to warrant mainstream media coverage. D: Link building still works (our team rocks at it).

What if I Have a Boring Client? You can overcome a boring client, but not one without a decent budget. The story doesn’t have to be, and in fact shouldn’t be, about your company or client. It should be about a topic that is already in the news. Nobody wants to hear your personal injury client talk about his latest slip-and-fall case, but you can bet there is a TV news station or online newspaper out there right now looking for a “legal expert” to talk about any number of high-profile legal cases going on at the moment. The trick is to develop your client as a “legal expert” worthy of being interviewed on the topic. This takes a commitment on their part. You have to start small, develop momentum, and be pro-active in contacting the media. The more interviews they get, the more – and higher-profile – outlets they can pitch. All of this is easier said than done.

PR and Brand Building is Hard! There’s no doubt about that. And, unfortunately, the cost is way beyond the typical monthly link building budget for most SEO clients. Entire SEO business models are currently shifting around this area. Smarter people than me have already figured out how to make the leap without overpricing their services and alienating small business clients. A theme I keep returning to in this post is one of specialization. To be successful at this kind of public relations and brand building you need to know it forwards and backwards. If you want to be the next SEO-centric Public Relations expert I think your future will be bright, but you’ll have to start specializing ~~yesterday~~ now.

HTTP Header Status Codes

Even status code best practices are changing! The jury is still out on some of this, but a lot of people recommend the 410 over the 404 because it can get a URL dropped from the index faster and is, technically, the more accurate code for web documents that have been deliberately removed from the domain. John Mueller from Google recently confirmed this when, in response to Barry Schwartz, he clarified his statements from a Google Webmaster Help thread:

We do treat 410s slightly differently than 404s. …If you want to speed up the removal (and don’t want to use a noindex meta tag or the urgent URL removal tools), then a 410 might have a small time-advantage over a 404.
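If you decide a 410 is right for content you’ve pulled on purpose, the logic is simple: known-removed URLs get a 410, unknown URLs get a 404. Here is a minimal sketch using Python’s built-in `http.server`, assuming a hypothetical `REMOVED_PATHS` set and a one-page `LIVE_PAGES` map; on a real site you would more likely express the same rule in your web server or CMS configuration.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import urlsplit

# Hypothetical site data: live pages, and pages deliberately removed for good.
LIVE_PAGES = {"/": b"<html><body>Home</body></html>"}
REMOVED_PATHS = {"/old-promo/", "/discontinued-widget/"}
ERROR_BODY = b"<html><body>Sorry, that page is not available.</body></html>"


def status_for(path):
    """200 for live pages, 410 for pages removed on purpose, 404 for anything else."""
    if path in LIVE_PAGES:
        return 200
    if path in REMOVED_PATHS:
        return 410  # Gone: removed deliberately and not coming back
    return 404      # Not Found: we know nothing about this URL


class Handler(BaseHTTPRequestHandler):
    def do_GET(self):
        path = urlsplit(self.path).path  # ignore any query string
        code = status_for(path)
        body = LIVE_PAGES.get(path, ERROR_BODY)
        self.send_response(code)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Run a local test server (illustration only, not for production)."""
    HTTPServer(("", port), Handler).serve_forever()
```

Keeping the decision in a plain `status_for` function makes it easy to test on its own; calling `serve()` starts a local server on port 8000 so you can check the codes with a header checker.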

SEOs also need to keep an eye out for soft 404s (URLs that return a 200 OK status for what is really an error or empty page), though this has been an issue for many years now. The good news here is that Google no longer seems to reject your XML sitemap just because it contains soft 404s, as it did a few years ago.
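Soft 404s are easy to miss because the server reports 200 OK. A crude way to hunt for them during an audit is to flag 200 responses whose text matches typical “not found” wording or is suspiciously thin. The phrases and the 200-character threshold below are hypothetical starting points, and the regex tag-stripping is deliberately rough, so treat hits as pages to eyeball, not verdicts.

```python
import re

# Hypothetical "error page" wording; tune these to the site's own templates.
NOT_FOUND_PATTERNS = [
    r"page (?:was )?not found",
    r"no longer available",
    r"no (?:results|products) found",
]


def looks_like_soft_404(status_code, html, min_text_chars=200):
    """Flag a 200 OK page that reads like an error page or an empty stub."""
    if status_code != 200:
        return False  # a real 404/410 is a hard error, not a soft 404
    text = re.sub(r"<[^>]+>", " ", html)              # crude tag stripping
    text = re.sub(r"\s+", " ", text).strip().lower()  # normalize whitespace
    if any(re.search(pattern, text) for pattern in NOT_FOUND_PATTERNS):
        return True
    return len(text) < min_text_chars  # very little visible text: likely a stub
```

Feed it the status code and body from your crawler of choice; anything it flags is a candidate for a real 404/410, a redirect, or better content.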

Content Quality

Content has always been important, and presumably “quality” has always been a major factor. However, with a few decent links hard-won by link building efforts and a decent, trusted domain, even crap content could rank well. A lot of SEOs figured it wasn’t their problem to deal with. Their job was to get a site to rank well, which meant that content had to be written for search engines.

Multiple algorithm updates have blasted such sites out of the index, but Panda was a particularly effective hatchet in this area. Once upon a time you could have thousands of stub pages (e.g. empty listing pages for businesses, coupons or products); templated content with geo-modified search & replace keywords (e.g. directories and Internet Yellow Page, or IYP, sites); useless content designed to bring in specific searches; spun content; duplicate content… and the worst thing that could happen was that those pages would be put into the supplemental index and wouldn’t rank very well. The rest of your site would be fine. IYP-type sites and vertical directories loved this. It meant they could put up a generic page for every single city in the country, fill it with templated content that replaced one city name with another, and once someone signed up to be listed on that page it would move from the supplemental to the main index and start ranking. Content mills loved it because they could have 80% crap articles as filler content and long-tail traffic generators without negatively affecting their short-tail rankings for the 20% of their content worthy of being featured on the forward-facing site.

Panda changed ALL of that. Now even a small percentage of crap content can pull down rankings for an entire site, including your award-winning, trusted resources with links from dozens of high-profile media outlets.

If you have an eCommerce site and don’t have content on category pages you are three years behind. If you have an eCommerce site and the content on your category pages consists of filler like “This is our blue widget category where you’ll find the best blue widgets to choose from…” then you’re only two years behind and should read this. If you have an eCommerce site with useful content on category pages that helps the shopper decide which sub-category, brand page or product page to click on next then I’d like to shake your hand because you know what’s up.

Does Your Content Deserve to Rank? Sometimes you just have to give yourself some tough love. Five years ago I might have looked at keyword use, internal and external links, how long the page had been up and other on-page factors like header, alt and strong tags to make this determination. These days I have to read the content and pretend I am a searcher who arrived at this page from Google after typing the targeted keyword. Does this page answer my question, entertain me, or otherwise provide me with the content I was looking for with that search? Or is it simply “good enough” to rank “for now” with the intention that I’ll either click on an ad, buy something or go to a different part of the site? This is the crux of the matter, because now “good enough” isn’t good enough.

An Aside: What Makes a Good SEO These Days?

I really do feel for anyone who is just getting into this industry. Those who come from a technical background will likely have trouble with the PR/Marketing aspect, and those who come from a Marketing/PR background will likely have trouble with the technical aspect.

Everything is moving at warp speed and the tasks that fall on a typical SEO’s plate keep stacking up. I get overwhelmed and I’ve been doing this for about eight years now – not as long as some, but long enough to see some big changes. I can only imagine what the marketing team intern feels when they’re hired on full time to “do SEO” and get shipped off to their first conference to “learn how”. It must be similar to trying to take a sip of water from a fire hose.

So what makes a good SEO these days?

I’d say the same thing that made a good SEO five, eight or ten years ago: Someone who loves to learn. Someone who gets bored easily and needs to be constantly challenged. Someone who can think with both sides of their brain.

Now back to the list of SEO tasks. Let’s see, what shall we stack on next…

Sitemap Changes

XML Sitemaps: While they’re not “new,” I do remember when they didn’t exist and a “sitemap” meant a bunch of footer links, an HTML sitemap page, or a visual site map of pages for use in designing and developing a new site. Now there are many different types of XML sitemaps and several approaches to implementation.

Sitemap Segmentation: Submitting a separate sitemap for each section of your site (e.g. eCommerce categories, or top categories and sub-categories), an approach popularized by Vanessa Fox and others, can give you insight that a single sitemap cannot. For instance, if your indexation rate is low, you might want to know whether that is a site-wide issue or specific to one section.
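Here is a minimal sketch of segmentation in Python: one `<urlset>` per section, a `<sitemapindex>` pointing at all of them, and a helper that turns per-sitemap submitted/indexed counts (the kind of numbers Google Webmaster Tools showed for each submitted sitemap) into indexation rates. The function names, section names, URLs and counts are all hypothetical.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(urls):
    """Return a <urlset> sitemap document (as a string) for one site section."""
    urlset = ET.Element("urlset", xmlns=NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def build_index(sitemap_urls):
    """Return a <sitemapindex> that points at each per-section sitemap."""
    index = ET.Element("sitemapindex", xmlns=NS)
    for loc in sitemap_urls:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = loc
    return ET.tostring(index, encoding="unicode")


def indexation_rates(submitted, indexed):
    """Indexed / submitted for each section, to see which section is lagging."""
    return {name: indexed.get(name, 0) / count
            for name, count in submitted.items() if count}
```

With made-up counts such as `indexation_rates({"categories": 200, "products": 1000}, {"categories": 190, "products": 300})`, you get 95% for categories and 30% for products, which points to a section-specific problem rather than a site-wide one.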

Vertical Search Sitemaps (News, Images, Video…): What started off as a single XML sitemap for HTML pages, then grew into multiple sitemaps for each content type, then grew into multiple segmented sitemaps for each content type (each with its own markup), has now evolved into a single sitemap in which you can mix and match content types. #FullCircle?
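To make the “mix and match” idea concrete, here is a sketch of one sitemap that lists a page along with an image on that page, using Google’s image sitemap extension namespace. News and video entries use their own extension namespaces and elements, which are not shown; the function name and URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the core sitemap namespace as the default and images as "image:".
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def build_mixed_sitemap(pages):
    """pages: {page_url: [image_url, ...]} -> one sitemap with pages and their images."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")
```

Each image is nested inside the `<url>` entry of the page it appears on, which is what lets one file carry both content types.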

Social Integration

I remember when social media marketing was just an offshoot of SEO. We would submit to sites like Digg and Reddit, then “network” with our friends to get the word out so people would vote for our content so we could get on the front page so we would get a firehose of traffic that often would crash the servers (the only time in my life when I remember being happy about crashed servers) so we could get a ton of links so we could rank higher. I remember when we hired our first social media expert because it became too much for me to manage on my own. And since that time social has grown up to be its own channel, completely independent of, yet inextricably connected to, search. Here are some of the new tasks and considerations that we need to make given the state of Search and Social today…

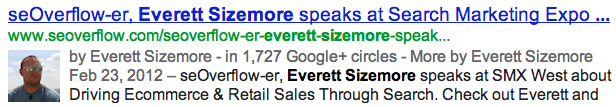

Rel Author & AuthorRank

What if an article ranked well not just because of the keywords in the content, or the amount and quality of links, but just because of who the author was? What if you were your own brand and your brand followed you around the web, adding credibility to the content you write? What if you could increase CTR in the SERPs just by adding a few lines of simple code and linking to the site from your Google+ profile? These questions highlight the importance of using rel author / rel me tags and getting your mugshot in the SERPs next to your content. This is one of those tasks that we’re thankful to have added to our priority list as SEOs.

There are multiple ways of handling rel author markup at this point, though I hesitate to mention them specifically because they tend to change on a weekly basis. My personal site esizemore.com is a single-author WordPress site, so I simply put the rel="me" tag in as part of the site-wide template. You can see it in action with this query. The seOverflow site has several authors, so we opted to go with a rel="author" link to our bio pages, which then link with rel="me" to our Google+ profiles. You can see that in action here. In both scenarios you need to link back from the “Contributor” section of your Google+ profile to the site where your rel author markup is located. Otherwise I’d just put Danny Sullivan’s or Barry Schwartz’s rel me link on everything I write.
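Because both setups hinge on a chain of reciprocal links, it helps to check the chain rather than eyeball it. This sketch parses `rel` values with Python’s `html.parser` and confirms article to bio page (`rel="author"`), bio page to profile (`rel="me"`), and profile back to the site. The function names are hypothetical, and since the profile’s “Contributor” links can’t be parsed here, they are passed in as a plain list. (Google has since retired authorship markup, so treat this purely as an illustration of the linking pattern.)

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class RelLinkParser(HTMLParser):
    """Collect href values of <a> and <link> tags, keyed by each rel token."""

    def __init__(self):
        super().__init__()
        self.rels = {}

    def handle_starttag(self, tag, attrs):
        if tag not in ("a", "link"):
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        for rel in (attrs.get("rel") or "").lower().split():
            if href:
                self.rels.setdefault(rel, []).append(href)


def rel_links(html):
    parser = RelLinkParser()
    parser.feed(html)
    return parser.rels


def authorship_chain_ok(article_html, bio_html, bio_url, profile_url, contributor_urls):
    """True if article -> bio (rel=author) -> profile (rel=me) -> back to the site."""
    site = urlsplit(bio_url).netloc
    return (
        bio_url in rel_links(article_html).get("author", [])
        and profile_url in rel_links(bio_html).get("me", [])
        and any(urlsplit(u).netloc == site for u in contributor_urls)
    )
```

A broken link anywhere in the chain returns `False`, which is exactly the case where the thumbnail silently fails to appear.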

Below are a few resources you may find useful when sorting through the different rel author markup options to find the one most suitable to your needs…

- Google Webmaster Tools Info on Author Markup

- One of AJ Kohn’s Killer Posts on How to Implement Rel=Author

- Rel Author and Rel Me on WordPress Sites

- AJ Kohn on AuthorRank and Agent Rank

- Author Rank: What You Need to Know, on the Raven Blog

- Bill Slawski on Microsoft Author Rank

- Google Help Forums: Authorship Markup

Social Signals as Ranking Factors

Both Google and Bing have publicly stated that they use social signals as ranking factors, and tests have shown that Facebook and Twitter shares/likes are at the very least “correlated” with higher rankings. Though there have been mixed signals from Google about whether G+ counts have a direct impact on rankings, I think the question is almost moot since they have an obvious impact on personalization, which in many cases translates to rankings.

Google Search Plus Your World (SPYW) and Personalization

Google has been personalizing search results for many years, and they just keep getting more personal. First it was for users who were logged into Google accounts, or based on their geographic location. Then in 2009 they started personalizing results even if you weren’t logged in. I think at that point many of us started using plugins that add the pws=0 parameter to search query URLs, but even that doesn’t work if you don’t have Instant Search turned off. Last year (2011) Google started blending social search results into the universal search listings using a variety of social networks. There was data drama between Google, Twitter and Facebook, which many believe was the catalyst for what came next…
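For the curious, here is roughly what those plugins boil down to: rewriting the search URL so it carries `pws=0`. A minimal sketch with Python’s `urllib.parse` (the function name is mine); as noted above, the parameter alone doesn’t guarantee depersonalized results, and Google may simply ignore it.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def depersonalized_search_url(url):
    """Return the search URL with pws=0 set, replacing any existing pws value."""
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
             if k != "pws"]
    query.append(("pws", "0"))
    return urlunsplit(parts._replace(query=urlencode(query)))
```

For example, `depersonalized_search_url("https://www.google.com/search?q=blue+widgets")` gives `https://www.google.com/search?q=blue+widgets&pws=0`.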

Google SPYW changes your search results based on your social connections and activity, specifically on Google+. You can turn it on or off and set some basic personalization preferences. The heavy-handed way Google+ and SPYW were implemented in the SERPs has made it virtually impossible for SEOs to ignore social signals as a ranking factor for logged-in users. Google Fellow Amit Singhal said at SMX London last month that SPYW “is the first baby step to achieve Google’s dream” and that data shows users like personal results. In other words, search and social are married in the church of Google and they’ll be having a family soon. This could be good for marketers who build their brand and social channels, because when “users like” a new SERP feature, it can also lift clickthrough rates for early adopters.

As an added SEO task, instead of worrying only about how many links you have, keyword use within page content, crawlability and other technical issues, we now need to think about the social graph as well. How many people have you in their circles? What words do you use in your G+ introduction? Should we put a G+ button on client sites? Does every client need a Facebook, Twitter and G+ page and, if so, how do we optimize them and how do they interact with each other and the main site? How do you implement the “Rel Publisher” tag? How do Google+ pages interact with rel author thumbs in the SERPs (e.g. does being in more circles make it more likely that your thumb will show up?) and so on and so forth…

Rather than reinvent the wheel, I’d like to link out to some folks who know more about the unfortunately-named Google products and some of the things SEOs need to think about in regard to them…

- AJ Kohn’s Ultimate G+ / Gspy Post

- Danny Sullivan’s Search Plus Mega Post

- Why Every Marketer Needs a G+ Strategy

- Lisa Barone’s Humorous and Insightful Post on Big Social Search Babies

- Real Life Examples of Search Plus Result Shifts

- Lee Odden on SEO Tips for Search Plus Your World

Google Places Moved to G+

I wasn’t sure whether to put this under local or social, but you can read up on it here. A lot of agencies out there are probably thinking the same thing. Does our local search person still handle this, or does it move over to the social media person now? Either way I think the local search SEO is going to have to get into social (if they hadn’t already) and the social media profile manager is going to have to get into local search. I feel sorry for them both.

Usability and User Experience

Both in terms of the user experience on Google, as well as on your site, SEOs are having to think about human beings more than search engine bots and algorithms these days. Here are a few of the things that have landed on our plates as of late…

On-Site UX: Probably for several years, but certainly since Panda, providing the best user experience has become an increasingly important part of the job. This is due to the user feedback signals available to Google. We can theorize about what they are and how they are collected (Chrome, the Firefox search deal, logged-in Google accounts, Gmail links, Analytics, etc.), but the fact is Google has massive amounts of data about how users react to your site as a whole, to links to your site, and to your site in the search engine results. They know who visits; how long they stay; what other sites they visit; what queries they used before, during and after visiting your site; where else they are interacting with your brand; and a whole host of known and unknown useful pieces of data. You can fool a bot pretty easily, but it’s tough to fool a user. Factor in how algorithm updates are evaluated by quality raters, who are asked to judge the relevancy and trustworthiness of a set of results for given queries, and it becomes apparent that the ups and downs we see in the rankings, though not directly caused by any one person, are heavily influenced by user behavior, which in turn is heavily influenced by the experiences users have on your site. And thus user experience has become “an SEO thing,” which is why we have a conversion team at Inflow.

Poorly Performing Pages: Whether Google uses Analytics data in its algorithm as a ranking factor is beside the point here. What matters is which pages and keywords are providing a poor user experience. I used to look at pages and keywords to see what was ranking and how I could improve rankings. I still do. However, nowadays I also look at UX metrics like time on site, average order size, pages per visit and bounce rate to determine which pages or keywords aren’t doing well. Then I try to figure out what the problem is. Sometimes it’s a poorly matched keyword / landing page combination, in which case I update the internal and external linking strategy, as well as on-page factors, to help a better page show up or to improve the page that is already ranking. Sometimes it is a slow-loading page, an ad or fly-out nav that covers up the content, or a broken HTML tag causing something not to work. It could be any number of things that make a visitor from the search engines decide they don’t like what they’ve found. If someone had told me six years ago that changing an image from an old white lady to a young African American would affect rankings for a product page, I’d have thought they were crazy. And probably racist. But now an SEO really has to know who the target demographic is, because you need to give those users the experience they want. While the image change is an extreme example, you can bet that a pop-up ad you can’t close will send your user experience metrics plummeting faster than a social media company’s IPO.
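Here is a minimal sketch of that triage step, assuming you have exported per-landing-page metrics from your analytics package into simple records. The `PageStats` fields and every threshold are hypothetical defaults; set them relative to the site’s own averages, and remember that a flag only tells you where to look, not what is wrong.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    landing_visits: int
    bounce_rate: float        # 0.0 to 1.0
    avg_time_on_page: float   # seconds
    pages_per_visit: float


def flag_poor_pages(pages, min_visits=100, max_bounce=0.7, min_time=20.0, min_depth=1.5):
    """Return (url, reasons) for pages with enough traffic but weak engagement."""
    flagged = []
    for p in pages:
        if p.landing_visits < min_visits:
            continue  # too little data to judge fairly
        reasons = []
        if p.bounce_rate > max_bounce:
            reasons.append("high bounce rate")
        if p.avg_time_on_page < min_time:
            reasons.append("short time on page")
        if p.pages_per_visit < min_depth:
            reasons.append("few pages per visit")
        if reasons:
            flagged.append((p.landing_visits, p.url, reasons))
    flagged.sort(key=lambda item: item[0], reverse=True)  # busiest problem pages first
    return [(url, reasons) for _, url, reasons in flagged]
```

Sorting by landing visits puts the highest-traffic problem pages at the top, which is usually where a fix pays off first.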

SERP Changes: We can’t possibly cover all of the search engine results page user experience changes in the scope of this post, though we’ll touch on certain ones elsewhere. Suffice it to say that if there’s one constant about Google SERPs these days, it’s that they’re always changing. From the Google Maps / local Places listings that seem to change on a weekly basis; to the addition of more universal search elements from vertical search; to the real-time search feature that lasted from December 2009 to July 2011; to author thumbs; to various schema markups; to choosing their own title tags for you; to breadcrumbs; to bulleted lists; to organic Google ~~Product Search~~ Shopping results, which are soon to become pay-to-play; to “Search Plus Your World;” to the new “Knowledge Graph;” to big site links and little site links and if my fingers had lungs they’d have passed out from lack of oxygen several semicolons ago. For the sake of my arthritic future, I’m hoping you get the point. This is a LOT to keep up with!

Rel Canonical (single domain and cross-domain)

This is one of those tags that was introduced to solve one problem and ended up creating a half-dozen others. I know of sites that were cruising along just fine until one day someone implemented rel canonical tags incorrectly, with disastrous results. Some SEOs insist that every page have one. Others ignore them altogether because of the problems they create, and seek instead to fix the underlying problem rather than the symptom. Most of us do a little of both depending on the situation. Either way we need to A: see if a site uses them or needs them, and B: make sure they are implemented correctly.

Some Rel Canonical Issues to Look Out For

- Self-referencing cross-domain rel canonicals: Each domain references its own version of the content. This happens often when the rel canonical code uses a relative path and multiple domains access the same code

- Self-referencing rel canonical tags on non-canonical pages: Similar to the above issue but on the same domain

- Conflicts with other tags: A rel canonical on ?page=2 points to the first page, but the first page is indexable and page two is noindexed. There are many other examples of conflicting tags in this way, including rel next / prev and canonical view-all pages

- Multiple rel canonical tags on a single page, sometimes with different URLs: I’ve seen this happen when one tag was hard coded at the page level and another was an include in the header.php file or some other site-wide implementation conflicting with a page-specific implementation
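Several of the issues above can be caught automatically. This sketch, using Python’s standard `html.parser`, flags multiple canonical tags, relative canonical hrefs (the usual cause of cross-domain self-referencing), a canonical that disagrees with the URL you expected, and a canonical sitting on a noindexed page. The function and class names are hypothetical, and so is the `expected` argument: you have to supply the URL you believe should be canonical, since the page itself can’t tell you.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Collect rel=canonical hrefs and robots meta directives from one page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonicals.append(attrs.get("href") or "")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots += " " + (attrs.get("content") or "").lower()


def audit_canonical(page_url, html, expected=None):
    """Return human-readable rel canonical problems found on one page."""
    parser = CanonicalParser()
    parser.feed(html)
    problems = []
    if len(parser.canonicals) > 1:
        problems.append(f"{len(parser.canonicals)} canonical tags found")
    for href in parser.canonicals:
        resolved = urljoin(page_url, href)
        if not href.startswith(("http://", "https://")):
            problems.append(f"relative canonical {href!r} resolves to {resolved}")
        if expected and resolved != expected:
            problems.append(f"canonical points to {resolved}, expected {expected}")
    if parser.canonicals and "noindex" in parser.robots:
        problems.append("page is noindexed but also declares a canonical")
    return problems
```

Run it over every URL in a crawl (and over the same template served on each domain) and an empty list means none of these particular problems turned up; it doesn’t prove the canonical strategy itself is right.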

While the rel canonical tag did solve some issues for SEOs it also made our jobs significantly more complicated and added several other potential issues for us to diagnose when things go south.

Recommended Reading:

- Webmaster Tools Help on Rel Canonical

- Guide to Rel Canonical – How To and Why Not to Use It

- Solving Duplicate Content Issues

- Rel Canonical Vs 301 Matt Cutts Video (also listen to what he says about 301s losing some PageRank)

- Cross Domain Rel Canonical Support

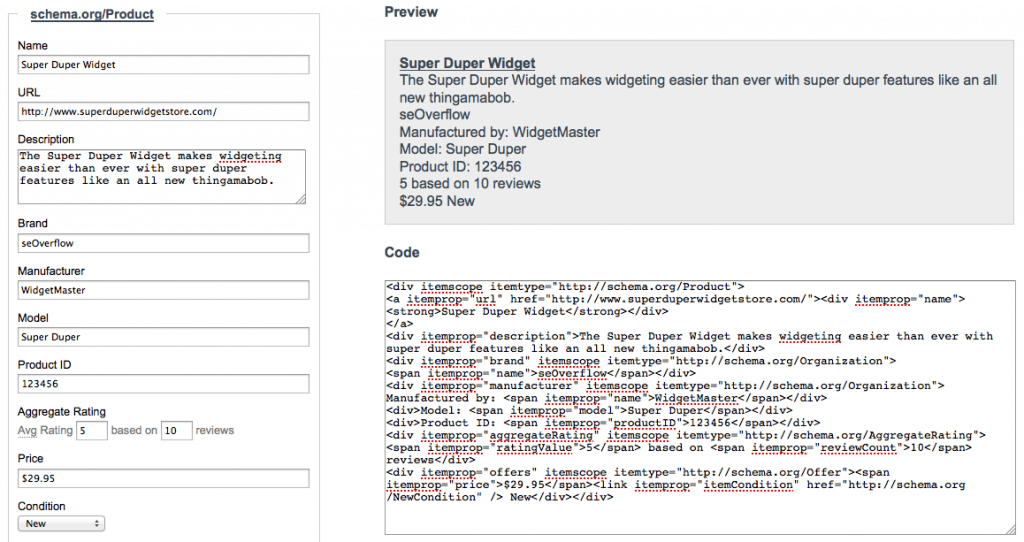

Micro-Formatting, Rich Snippets and Structured Data

hCard, XFN, HTML5 microdata, hReview, Rel tags, Schema.org, RDFa, rich snippets, structured data, micro-formats, the semantic web… Oh My! You may already know all about this stuff, but if it makes your head spin, it helps to think about it this way: just because you know that 555-123-4567 is a phone number doesn’t mean Google does. As far as they know it could be a SKU, a serial number, or a song title. All of these acronyms and new phrases are essentially about dealing with that problem in its many manifestations. The fact that there are so many options and so many ways to implement them is just one more thing we have to deal with as SEOs. Don’t feel bad if you have yet to get a full grip on them either. Google is having trouble too.

Which is Best? (RDFa, HTML5 Microdata, Microformats…) Personally, I go with Schema.org markup, which was a collaboration among Google, Bing and Yahoo to create a shared structured data vocabulary that all three search engines can support. Schema.org includes schemas covering most situations, including products, events, organizations, people, places, offers, ratings and combinations of some of those things, in addition to many others. Google also recommends HTML5 microdata as a way to mark up information.
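Going back to the phone number example, here is a minimal sketch that emits a Schema.org `LocalBusiness` marked up with HTML5 microdata, so the number is explicitly labeled as a `telephone` rather than left for Google to guess. The function name and business details are hypothetical; check real output with the Rich Snippet Testing Tool.

```python
from html import escape


def local_business_microdata(name, telephone, street, city):
    """Return an HTML snippet describing a business with Schema.org microdata."""
    return (
        '<div itemscope itemtype="https://schema.org/LocalBusiness">\n'
        f'  <span itemprop="name">{escape(name)}</span>\n'
        '  <div itemprop="address" itemscope itemtype="https://schema.org/PostalAddress">\n'
        f'    <span itemprop="streetAddress">{escape(street)}</span>,\n'
        f'    <span itemprop="addressLocality">{escape(city)}</span>\n'
        '  </div>\n'
        f'  Call us: <span itemprop="telephone">{escape(telephone)}</span>\n'
        "</div>"
    )
```

Visitors still see an ordinary address and phone number; the `itemprop` attributes are what tell a search engine which string is which.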

Must-Have Microformatting Links:

- Google Rich Snippet Testing Tool

- Google Webmaster Tools Rich Snippets Help

- Schema.org Schemas

- A New Approach to Structured Data

- Also see the example in the eCommerce section below…

My first encounter with contributing to the semantic web: I remember the day it was announced that Google had purchased MetaWeb, the startup behind the ambitious FreeBase.com project. I was working for Gaiam at the time (Disclosure: now an seOverflow client) and I immediately went over and started connecting the dots between Gaiam’s various brands, instructors who appeared in Gaiam workout videos and more. Among other things, I was able to describe all of the business units and tell Google that the yoga instructor Rodney Yee was married to Colleen Saidman, both of whom were yoga instructors for Gaiam, which owned the brands Gaiam Yoga Club and Spiritual Cinema Circle, which were started in this year by this person in this city, and here are the products they sell or the services they offer. It is all transparent. Imagine my lack of surprise when Google announced a year later that they would be using structured data to help them figure out relationships in the semantic web. Add this to your task list: When Google buys a company you should look into it. They do it often, and they always do it for a reason.

Navigation & Pagination

The rel canonical, crawlability, navigation and pagination tasks and tools all blend together in many aspects. Learning what they are, what they’re for and how they interact with each other can be like untangling knots in fishing line sometimes. Add to that the aspect of figuring out which tool, or combination of tools, is best for each situation – or diagnosing what went wrong post-implementation – and you can see why many SEOs spend a lot of time dealing with these things.

Rel Next / Prev: These tags are a good way to consolidate the power of multiple paginated pages into the first page in a paginated set. They fill in a few gaps left open by nofollow, noindex and rel canonical tags, which were typically used to solve a variety of pagination issues. You can use rel next / prev in addition to, or in place of, these other tags, depending on the situation. The first thing you may need to know is that the tags go in the head section of the document, rather than on the pagination links themselves, which makes implementation quite a bit trickier. Read up on these tags here and here.
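As a rough illustration of where the rel next / prev tags end up, the Python sketch below generates the link elements for one page in a paginated series. It assumes a hypothetical URL scheme where page 1 lives at the bare category URL and later pages use a ?page=N parameter; your CMS will almost certainly differ, so treat this as a sketch rather than a drop-in implementation. Note that page 1 only gets a "next" tag and the last page only gets a "prev" tag.

```python
def pagination_link_tags(base_url, page, total_pages):
    """Return the rel="prev"/rel="next" link tags for one page of a series.

    Assumption: page 1 lives at base_url and page N (N > 1) at base_url?page=N.
    """
    if not 1 <= page <= total_pages:
        raise ValueError("page out of range")

    def url_for(n):
        # Page 1 points at the bare URL so we never create a ?page=1 duplicate.
        return base_url if n == 1 else f"{base_url}?page={n}"

    tags = []
    if page > 1:
        tags.append(f'<link rel="prev" href="{url_for(page - 1)}">')
    if page < total_pages:
        tags.append(f'<link rel="next" href="{url_for(page + 1)}">')
    return tags

# Tags for the head section of page 2 in a three-page series:
for tag in pagination_link_tags("http://www.example.com/shoes", 2, 3):
    print(tag)
```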

View All Canonical: This is a good alternative to self-referencing rel canonical tags on each paginated page. While you wouldn’t want the rel canonical tag on page 2 to point to page 1 as the canonical, you could have ALL pages point to your “View All” page as the canonical. I’ve had mixed success with this. On fast-loading content pages it tends to work well. On category pages with hundreds of products it tends to not work very well. All I can say is to experiment, pay close attention to category page traffic and rankings after implementation, and read this post carefully before venturing down the View All Canonical road.
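The difference between self-referencing canonicals and a View All canonical comes down to which URL each paginated page names. This sketch reuses a hypothetical ?page=N URL scheme; the view_all_url parameter is an assumption standing in for wherever your View All page actually lives.

```python
def canonical_tag(base_url, page, view_all_url=None):
    """Return the rel="canonical" tag for one page of a paginated series.

    With view_all_url set, every page (page 1 included) points at the View All
    page. Otherwise each page is self-referencing, so page 2 never claims
    page 1 as its canonical.
    """
    if view_all_url:
        target = view_all_url
    else:
        target = base_url if page == 1 else f"{base_url}?page={page}"
    return f'<link rel="canonical" href="{target}">'

print(canonical_tag("http://www.example.com/shoes", 2))
print(canonical_tag("http://www.example.com/shoes", 2,
                    view_all_url="http://www.example.com/shoes/all"))
```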

Faceted Navigation Strategy: I typically see faceted navigation used on eCommerce sites. It can be very useful for shoppers and can improve conversion rates and average order value. However, it is also a bloody nightmare from an SEO perspective. Faceted navigation is when you can filter any given category in several different ways (e.g. category, price high to low, price low to high, color, best sellers…). This is different from the typical “Sort by price” option that adds a single ?sort=price parameter to the URL. Imagine shopping for blue shoes and being able to access a category page by going to the blue category followed by a shoes filter, or the shoes category followed by a blue filter. Then you can add on parameters or folders to narrow the selection by price, feature, ratings, offers, brands… and once you’re in brands you can re-sort by all of those other parameters all over again. If you want to see this in action, go printer shopping at BestBuy.com and pay attention to the URLs as you sift through the options. Some content management systems treat the category navigation and faceted filters separately, which makes fixing the issue rather easy, though you may limit crawling and lose some link equity going into those faceted URLs. It’s when the faceted filtering menu IS the navigation that you run into major problems.

I don’t have a one-size-fits-all approach to faceted navigation, but generally I will try to limit the depth at which search engines can crawl and/or index the content while working on ways to make the primary, canonical category page rank higher with unique content. One example might be to use wildcards in the robots.txt file combined with a special parameter added at the third filter to limit crawling to only two levels deep, while making sure the primary category page is the only one with static, textual content and that all other pages link to it via breadcrumbs. For example…

```
User-agent: *
Disallow: /*crawl=no
```

Once the user applies a third filter to their product search, ?crawl=no gets added to the URL as a parameter, and the wildcard Disallow rule above keeps compliant bots from crawling anything that deep. You can also play with robots meta noindex and nofollow tags for added protection, but the more complicated you make it, the easier it is for something to break.
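Here is one way to sketch the crawl=no approach in Python. facet_url is a hypothetical helper that appends crawl=no once a third filter is applied, and robots_pattern_matches approximates Google's documented wildcard handling (* matches any run of characters, a trailing $ anchors the end of the URL) so you can sanity-check which faceted URLs the Disallow: /*crawl=no rule would block. Other crawlers may interpret wildcards differently, and Python's built-in urllib.robotparser does not support them, which is why the matching is done by hand here.

```python
import re
from urllib.parse import urlencode

CRAWL_LIMIT = 2  # hypothetical: filters beyond this depth get the crawl=no flag

def facet_url(category_path, filters):
    """Build a faceted-navigation URL from an ordered list of (name, value) filters.

    Hypothetical scheme: once a third filter is applied, crawl=no is appended
    so the robots.txt rule "Disallow: /*crawl=no" can match it.
    """
    params = list(filters)
    if len(params) > CRAWL_LIMIT:
        params.append(("crawl", "no"))
    return category_path + ("?" + urlencode(params) if params else "")

def robots_pattern_matches(pattern, path_and_query):
    """Google-style robots.txt matching: '*' matches any run of characters,
    a trailing '$' anchors the end, and matching starts at the beginning of the path."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.match(regex + ("$" if anchored else ""), path_and_query) is not None

two = facet_url("/shoes", [("color", "blue"), ("price", "low-high")])
three = facet_url("/shoes", [("color", "blue"), ("price", "low-high"), ("brand", "acme")])
print(two, robots_pattern_matches("/*crawl=no", two))      # /shoes?color=blue&price=low-high False
print(three, robots_pattern_matches("/*crawl=no", three))  # ...&brand=acme&crawl=no True
```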

Other options include Rel Canonical tags to the main category page (which could limit crawling but may be the best option in certain circumstances), a View All Canonical page (see above), or a combination of different tools. I highly recommend reading this post on SEOmoz by Mike Pantoliano if you ever have to deal with faceted navigation.

In the wise words of Douglas Adams…

DON’T PANIC!

You don’t need to know everything there is to know about every aspect of SEO. We’ll get to more about how to keep your sanity, and your job, in a climate of warp-speed change later. In the meantime, keep working on building a strong foundation because… well a picture is worth a thousand words.

<— #GoodFoundationFTW

eCommerce SEO

eCommerce SEO is something I’ve been heavily involved in over the last few years. I wrote a post back in 2009 that won the SEMMY award for Best SEO Blog Post of the year, and though I’m proud of the post I have to cringe when I read it these days because SO MUCH has changed since then. I really need to go back and update it. Below are a few things that have changed for SEOs who work with online stores…

Google Merchant Center / Product Search / Shopping: I started this post back when Google Product Search aka Google Shopping was free and getting your products listed was as easy as submitting a valid feed. Posts like this still have value, but now Google Shopping comes with a cover charge. Again, this development happened between the time I started writing and the time I published this post. That is how fast we’re moving, folks. I hope you brought some Dramamine.

Schema Markup: As covered in the section on microformatting above, I prefer using Schema.org markup for product information and product reviews. This is the place to start when looking into the markup options. It can get a little confusing when you drill down into the review schema and see the breadcrumb change from Thing > Product on one page to Thing > Creative Work > Review on the next.

If you haven’t worked with Schema.org markup for products yet, here is what I’d advise in chronological order…

- Go here and read up on the product schema and all available attributes.

- Take your structured data and paste it into the HTML field here.

- Take a screenshot of the Google Search Preview snippet and send that, along with a txt file with the code, to your developers.

- Say please and tell them how awesome they are.
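If it helps to see what the finished markup might look like before you hand it to developers, the Python sketch below renders a schema.org Product with an Offer and an optional AggregateRating as HTML5 microdata. The product details in the usage example are invented, and only a few of the available properties are included; check the Product schema for the full list and run the output through the testing tool before shipping it.

```python
from html import escape

def product_microdata(name, description, price, currency, rating=None, review_count=None):
    """Render a schema.org Product as HTML5 microdata (a small subset of properties)."""
    parts = [
        '<div itemscope itemtype="http://schema.org/Product">',
        f'  <h1 itemprop="name">{escape(name)}</h1>',
        f'  <p itemprop="description">{escape(description)}</p>',
        '  <div itemprop="offers" itemscope itemtype="http://schema.org/Offer">',
        # Machine-readable currency code goes in a meta element's content attribute.
        f'    <meta itemprop="priceCurrency" content="{escape(currency)}">',
        f'    <span itemprop="price">{price:.2f}</span>',
        '  </div>',
    ]
    # Only emit a rating block when there are actual reviews to summarize.
    if rating is not None and review_count:
        parts += [
            '  <div itemprop="aggregateRating" itemscope itemtype="http://schema.org/AggregateRating">',
            f'    <span itemprop="ratingValue">{rating}</span> out of 5,',
            f'    based on <span itemprop="reviewCount">{review_count}</span> reviews',
            '  </div>',
        ]
    parts.append('</div>')
    return "\n".join(parts)

print(product_microdata("Blue Suede Shoes", "Soft & comfy.", 59.5, "USD",
                        rating=4.5, review_count=12))
```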

Product Descriptions: Writing unique product descriptions has always been a best practice. However, when you deal with enterprise eCommerce sites that have hundreds of thousands, or millions, of products, it isn’t exactly a scalable task for in-house copywriters. It used to be that you could write custom copy for the best-selling products, say the top 1,000, and let the rest rank where they may. Depending on how authoritative the domain and how trusted the brand was, you could bring in a lot of long-tail traffic from those pages, especially if some of them had a few user-generated custom reviews. However, since Panda came around you really can’t afford to do that. Those low-quality or duplicate content pages now have the ability to pull down rankings across your entire site, including those fantastic 1,000 top-shelf product pages you spent months optimizing. Furthermore, you can’t afford to have thousands of little accessory product pages that have little or no description at all.

You are faced with a choice: Either get unique, useful content on these hundreds of thousands of pages, or keep Google from showing them in the search results. Given the scalability issue, you can guess which camp most enterprise-level eCommerce sites are falling into. I think this is very unfortunate and makes the web a worse place, but I don’t own a search engine so all I can do is react and ~~complain~~ provide constructive criticism. A word of caution: I have found that simply putting a robots noindex tag in the page’s head section won’t do the trick. You may have to think outside the box. For instance, I know some people who have had success with forcing their low-quality pages to return a 404 status code, even if they really do exist. Others have combined robots.txt blocks with robots meta blocks. Ideally, however, you need to have unique, useful copy on every product page. Never use the description supplied to you by brands, manufacturers and distributors if you can help it.
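Deciding which pages need attention first is itself a scaling problem. One hedged approach, sketched below in Python, is to flag product pages whose copy is either thin or a near-copy of the manufacturer's description, using the standard library's difflib word-level similarity. Both thresholds are hypothetical starting points, not tested cut-offs, and the script only builds a triage list; whether a flagged page gets rewritten, noindexed or something else is still a judgment call.

```python
from difflib import SequenceMatcher

SIMILARITY_THRESHOLD = 0.8  # hypothetical cut-off; tune against your own catalog
MIN_WORDS = 50              # hypothetical "thin content" floor

def similarity(a, b):
    """Word-level overlap ratio in [0, 1] between two descriptions (1.0 = identical)."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()

def needs_unique_copy(our_description, manufacturer_description):
    """Flag a product page whose copy is thin or mostly the manufacturer's text."""
    too_thin = len(our_description.split()) < MIN_WORDS
    too_similar = similarity(our_description, manufacturer_description) >= SIMILARITY_THRESHOLD
    return too_thin or too_similar
```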

Here are a few other things you have to worry about now:

- Product Feeds (How to generate them, where to submit them, and how to keep it from plastering duplicate content across dozens of channels and thus killing your product page rankings when your Amazon or eBay store outranks you with your own description and the partner channel manager or affiliate manager takes credit for all of those sales while the executive team blames you for lost rankings and organic search revenue drops. Can you tell I’ve been there before?)

- Paid Inclusion in Google Shopping (This used to be an organic channel. Now that it’s pay-to-play whose job is it?)

- Google Trusted Stores program (It isn’t as easy as just applying. Though it is free to all merchants for now, you need to know how you’re going to supply Google with regularly updated information like when and why an order gets cancelled. And once you’re in, you need to maintain high standards like 90%+ on-time shipping. My advice: Let someone else handle this channel. You have enough to worry about without dealing with customer service issues too.)

- Faceted Navigation Filters (See above Navigation section)

- On-Site Reviews (Via third-party solutions like BazaarVoice and PowerReviews, or a home-grown solution)

- Off-Site Reviews (From sites like PriceGrabber, epinions, rateitall, viewpoints and more. These may affect product rankings, especially on Google Shopping, so you need to know how to get lots of positive reviews without violating guidelines. Here’s a hint: Don’t ask the person who received their order late and had to send it back three times before they got the right color.)

International SEO

We recently finished a strategy and recommendations document for a US client looking to break into international markets. The scope was to discuss all of the different options, including pros/cons and best practices, and then to make implementation recommendations specific to the option they ended up going with. The three primary options haven’t really changed in the last few years. They included, in order of preference: #1 Separate ccTLDs for each country; #2 One TLD (e.g. .com) with sub-folders (e.g. /france/); and #3 One TLD with subdomains (e.g. france.globalsite.com).

However, while the three primary options haven’t changed much, EVERYTHING else has. In fact, I started the document in mid-May and by the time I’d finished the first draft a week later the game had totally changed in regard to even the most recent developments. Specifically, while I was recommending the painful process of bulking up the code on every page by using multiple rel alternate hreflang tags (see below) in the head section of every page, Google finally figured out that it would be much easier to just do this in the XML sitemap.

So here are just some of the things to consider with an international site:

- ccTLDs, Subdomains or Subfolders

- XML Sitemap segmentation

- Webmaster Tools geotargeting

- Origin country homepage (e.g. US) or global homepage

- Single rel canonical per page, or self-referencing rel canonicals

- Country-specific pages, Language-specific pages or both

- Auto-detection of geolocation or not

- Auto-redirection to geolocation or not

- Rel alternate href lang tags for Google

- Meta content-language tags for Bing

- Interlink all of the international pages to each other or not

- Auto translation (e.g. Google Translate) or custom-written content

- Geographic location of web host

- A host of other factors, like cultural, budgeting and future-proofing considerations

Rel HREF Lang

While Bing seems to do pretty well with the meta language tag, Google has recently said they prefer the rel alternate hreflang tag. As I understand it, the tag can be used for pages in completely different languages, for the same “language” with different spellings and geotargeted areas (e.g. UK and US, or Spain and Mexico), or when you translate the page template (header nav, footer, sidebars…) but the main content is in a single language. One important thing to remember is that the hreflang tag for each country/language needs to go on all other pages. So the rel alternate hreflang tag referencing the Spanish page needs to be put on the English, Chinese, German… and all other pages. As you can imagine, maintaining separate headers for all of these different pages is a hassle. Google answered this with support for rel alternate hreflang tags in XML sitemaps.
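To illustrate the "every version lists every version" rule, here is a Python sketch that produces both the head-section link tags and the equivalent XML sitemap entries (using the xhtml:link element in Google's sitemap format) for a hypothetical set of US, UK, Spanish and Mexican URLs. Each page, or each sitemap url entry, carries the full set of alternates, including a reference to itself. The URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"

# Hypothetical set of equivalent pages, keyed by hreflang value.
ALTERNATES = {
    "en-us": "http://www.example.com/us/",
    "en-gb": "http://www.example.com/uk/",
    "es-es": "http://www.example.com/es/",
    "es-mx": "http://www.example.com/mx/",
}

def hreflang_link_tags(alternates):
    """Tags for the head section of EVERY version: each page lists all versions, itself included."""
    return [f'<link rel="alternate" hreflang="{lang}" href="{url}">'
            for lang, url in alternates.items()]

def hreflang_sitemap(alternates):
    """The same annotations moved into an XML sitemap: one url entry per version,
    each carrying the full set of xhtml:link alternates."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in alternates.values():
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
        for lang, href in alternates.items():
            ET.SubElement(entry, f"{{{XHTML_NS}}}link",
                          rel="alternate", hreflang=lang, href=href)
    return ET.tostring(urlset, encoding="unicode")

print(hreflang_sitemap(ALTERNATES))
```

Generating both outputs from one dictionary is a deliberate choice: the hard part of hreflang is keeping every page's list in sync, and a single source of truth makes the reciprocal links hard to get wrong.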

Check out…

- Rel Alternate hreflang tags

- Rel Alternate hreflang tags in XML sitemaps

- International SEO with Rel=”Alternate” Hreflang=”X”

- Multilingual and Multinational Site Annotations in Sitemaps

- Pretty much anything you can read by this guy (Andy Atkins-Krüger)

Local SEO

Local Search has always been a strong area for seOverflow because Mike Belasco is a frequent speaker at national and international conferences on the topic of local SEO. I can’t keep track of how often this changes. It is just insane and, quite frankly, I’m going to leave it to Mike to pick up on this in a separate post (coming soon) about all of the changes that have happened recently in local search. Until then, here are some undoubtedly out-of-date local search ranking factors to which Mike contributed in 2011. Also, check out David Mihm’s “A Brief History of Google Places” timeline.

An Aside: Dealing with Frustration

All of the tasks we have to do, and the seemingly endless series of paradigm-shifting algorithm changes, features, products, guidelines and directives streaming out of the Googleplex and elsewhere, are enough to drive you insane. For me, local search is a particularly sanity-consuming area of search. For this reason, I choose not to study it. While I’ve learned a lot through osmosis just by working with experts like Mike Belasco and Dev Basu, and talking with good folks like David Mihm, Andrew Shotland and Miriam Ellis when I have the chance, I know that local search isn’t my cup of tea. If I tried to know as much about local as I know about eCommerce I’d probably just throw my hands up in defeat and start a new career farming sheep.

Link Building

We will be expanding on the changes in link building in the coming weeks, as our link building expert Alex shares his latest tips. In the meantime, here are a few of the new considerations we face…

- Google recently started deindexing private link networks and even some SEO companies allegedly associated with them

- One Word: Penguin

- A great idea that, according to Matt Cutts at SMX Advanced 2012, Google is now considering

- Negative SEO: Whiteboard Friday

- Negative SEO: Aaron’s Take

- Explosion of XRumer style link building (I hate this crap)

- Google Unnatural Link Notifications

Stay tuned for a more in-depth post about link building changes because this is one area that has had massive and frequent disruptions over the last few years. Also, see my rant above about PR Vs. Link Building ROI and you’ll have a pretty good idea of where I think this part of the industry is heading over the next 2-3 years.

Analytics

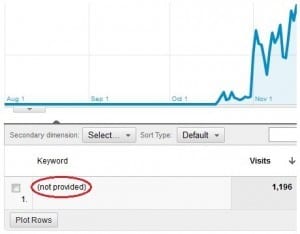

As analytics has become more complicated and useful, we’ve also lost a lot of data. This includes Yahoo Site Explorer (not analytics, but useful data we no longer have) and most importantly the infamous (not provided) data. Here are some analytics changes and analysis tasks that have landed on the SEO industry’s collective plate…

(not provided): You need to know how to get information out of data that is (not provided), and there are several good articles out there about exactly that. This is the single biggest analytics shake-up since Google bought Urchin and turned it into Google Analytics. While Google told everyone they would probably only see single-digit percentages of (not provided) traffic, things quickly grew well beyond that and into double digits for at least half the sites, and up to 20+% for many. These days it is rare to find a site that doesn’t have (not provided) as the top referring keyword, and often the top converting one as well. What a pity. Google claims this was a privacy issue, but if you don’t mind a bit of sailor talk here: That is bullshit. They still give the keyword data to paying AdWords customers, so what Google is essentially saying is “Your information is private, unless someone buys it off of us.” If you read that in a privacy policy on any other website you’d close your browser and never visit that site again. As SEOs we need keyword data. It is our lifeblood. I’m not sure what else to say about this development, other than it makes me very angry.
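One commonly discussed workaround, sketched below and not necessarily what the articles mentioned above recommend, is to redistribute each landing page's (not provided) visits across the keywords that still do come through for that page, in proportion to their known traffic. This rests on a big assumption: that hidden searchers behave like visible ones on the same page. Pages that receive only (not provided) traffic cannot be estimated this way and are simply dropped. The input rows are invented.

```python
from collections import defaultdict

def estimate_not_provided(rows):
    """Spread each landing page's (not provided) visits across the keywords
    that DID come through for that page, in proportion to their known visits.

    rows: iterable of (landing_page, keyword, visits).
    Assumption: hidden searchers behave like visible ones on the same page.
    """
    known = defaultdict(dict)
    hidden = defaultdict(int)
    for page, keyword, visits in rows:
        if keyword == "(not provided)":
            hidden[page] += visits
        else:
            known[page][keyword] = known[page].get(keyword, 0) + visits

    estimates = {}
    for page, keywords in known.items():
        total = sum(keywords.values())
        for keyword, visits in keywords.items():
            share = visits / total if total else 0
            estimates[(page, keyword)] = visits + hidden[page] * share
    return estimates

rows = [("/shoes", "blue shoes", 30), ("/shoes", "red shoes", 10),
        ("/shoes", "(not provided)", 20)]
print(estimate_not_provided(rows))
```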

Filters Galore! I’m a big fan of Google Analytics filters and segments. While I do miss the day when installing analytics from any major vendor was as easy as copying and pasting a piece of code into your template, setting up filters and segments to wrangle more information out of Google Analytics data is definitely worth the time. Here are a few resources that may help you…

- Creating a Filter for Image Search

- Track Rankings in Google Analytics

- Another GA Rank Tracking Filter Setup

- Obtaining Full Referral Data in GA

- 4 Ways to Recover (not provided) Data

- Just Use This Search for Many More

I can’t possibly list all of the great filters and segmenting options out there, but showing the full URL and tracking rankings have been very important for me. Just to give a real-world example, one of my favorite reports to run is what I call the “Page Two Performers” report, but it could also be run as a “Below the Fold Performers” report. Simply show all keywords that are sending a reasonable amount of traffic and/or conversions from the second page of Google (or from spots #6-#10). Set the threshold in a way that gets rid of most of the “noise”. For instance, if a keyword on page two brought in two visits, one of which converted, it will show up as a 50% conversion rate. Don’t get too excited about a sample that small. I typically make sure that there were at least 20 visits in a month from that keyword, but that changes depending on the site and its overall traffic levels. The idea of this report is simple: If those keywords are sending traffic/conversions despite less-than-ideal rankings, imagine what they could do with just a bit of a boost. These are “diamonds in the rough” and will be my focus keywords and landing pages for the coming month in terms of internal/external link building and on-page optimization.
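The "Page Two Performers" filter is simple enough to script once you have keyword-level data with a ranking position attached (for instance, from a rank-tracking filter like the ones above). The Python sketch below assumes a hypothetical KeywordRow record and applies the same 20-visit noise threshold mentioned in the text; passing positions=(6, 10) gives the "Below the Fold Performers" variant. The sample rows are invented.

```python
from dataclasses import dataclass

@dataclass
class KeywordRow:
    keyword: str
    avg_position: float  # hypothetical field, e.g. from a rank-tracking filter
    visits: int
    conversions: int

def page_two_performers(rows, min_visits=20, positions=(11, 20)):
    """Keywords ranking within `positions` that still drive meaningful traffic.

    min_visits filters out noise like "2 visits, 1 conversion = 50%".
    """
    low, high = positions
    hits = [r for r in rows
            if low <= r.avg_position <= high and r.visits >= min_visits]
    # Most conversions first, then most visits.
    return sorted(hits, key=lambda r: (r.conversions, r.visits), reverse=True)

rows = [
    KeywordRow("blue suede shoes", 12.4, 85, 6),
    KeywordRow("suede shoes", 14.0, 40, 9),
    KeywordRow("cheap shoes", 15.2, 2, 1),   # too few visits: noise
    KeywordRow("shoes", 3.1, 900, 40),       # already on page one
]
for row in page_two_performers(rows):
    print(row.keyword, row.avg_position, row.visits, row.conversions)
```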

Real-Time Analytics: Several companies have offered real-time analytics dashboards in the past, and Google Analytics added it to all accounts in 2011, making it easy to see what’s going on during major traffic spikes after, for example, a TV commercial or interview airs.

Multi-Channel Attribution Goes Mainstream: It isn’t always as simple as knowing that a user came from search and used this or that keyword, then converted on the site. Likewise, it isn’t always enough to know that someone arrived from a display ad. Some businesses, particularly at the enterprise level or those with complex affiliate lead generation relationships, need to know what the first touch and last touch were. Some businesses may decide to attribute the conversion to the first touch, others to the last touch, and still others will break up the conversion and apply a percentage of it to each. As if analytics weren’t complicated enough already. But to make things easier, LunaMetrics has provided an attribution modeling tool via Google Docs.
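The first-touch, last-touch and split-credit models described above can be expressed in a few lines. This Python sketch assumes you already have each converting user's ordered path of channels (the channel names are placeholders); "linear" is used here as a stand-in for splitting credit evenly, which is only one of many possible weighting schemes (position-based and time-decay models weight the touches differently).

```python
def attribute(path, value, model="linear"):
    """Split one conversion's value across the channels in a user's path.

    path: ordered touchpoints, first to last, e.g. ["display", "organic", "email"].
    model: "first_touch", "last_touch" or "linear" (even split).
    """
    if not path:
        return {}
    n = len(path)
    if model == "first_touch":
        shares = [value] + [0.0] * (n - 1)
    elif model == "last_touch":
        shares = [0.0] * (n - 1) + [value]
    elif model == "linear":
        shares = [value / n] * n
    else:
        raise ValueError(f"unknown model: {model!r}")
    credit = {}
    for channel, share in zip(path, shares):
        # A channel that appears twice in the path collects both shares.
        credit[channel] = credit.get(channel, 0.0) + share
    return credit

path = ["display", "organic", "email"]
for model in ("first_touch", "last_touch", "linear"):
    print(model, attribute(path, 90, model))
```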

Webmaster Tools: Major changes have happened in both Google and Bing’s webmaster tool dashboards. In fact, Bing made some big changes as I was writing this. Here are a few Google / Bing WMT changes, some of which create new tasks for the SEO…

- Google has been sending more messages to webmasters via GWT, including unnatural links, spam, major traffic drops, etc.

- SEOs now have control over parameter handling with both tools.

- SEOs now have control over geotargeting in GWT

- Bing added Link Explorer data in the Phoenix edition of BWT (presumably to replace lost YSE data)

- Bing added an SEO analyzer in the Phoenix edition of BWT

- You can fetch as Bing or Google bots, which is especially useful in identifying potential cloaking issues

- Both tools now have URL removal tools

- Reinclusion requests, including lots of clarification on manual Vs. algorithmic penalties

Paid Search

Admittedly, PPC is not my strong suit. However, here are a few of the things I know to have changed off the top of my head…

- Search Retargeting: SEO and PPC have always worked well together. For instance, you could test some keywords in the paid search arena before spending months going after them in organic only to find out they don’t convert for you. Search retargeting presents yet another way to leverage organic search data for paid search profits by paying for ads shown to users who have previously searched for your brand or keywords. As you can imagine, this opens up a big attribution model can of worms, but can also be extremely profitable.

- Google changed the free Product Search to Google Shopping, which is now Pay-to-Play

- Mobile ads took off in 2011

- Call tracking on PPC ads

- Multi-channel funnel analysis and new attribution models

- New paid search channels open up on social media sites (e.g. Facebook)

- New “automated rules” in AdWords make life a little easier for those not using bid / ad management software

- Quality Score now showing in adCenter interface

- GPlus +1 buttons showing on paid ads

Stay tuned for a more in-depth look by our resident PPC guru Billy Overin at what has changed in paid search over the last couple of years.

Mobile Search

Just what is a ‘mobile device’ anyway?

This question used to be pretty simple to answer: A phone. These days our phones are computers; our computers are phones; tablets are laptops; laptops are tablets; everything is a TV and almost everything is “mobile” in the portable sense of the word.

Mobile Explosion: There are 5.9 billion mobile subscribers (87% of the world’s population) with over 1.2 billion mobile web users, accounting for about 8.9 percent of visits to websites globally (source). People don’t worry about using too much data with unlimited data plans being the norm. Websites now render very well on most smartphones and tablet devices, making the browsing and shopping experience not all that different from being on a desktop. If none of this sounds like “news” to you, that is precisely the amazing part! Accessing information on the web from a mobile phone was so difficult and expensive when I started doing SEO that it just wasn’t something we had to worry about, though there was a lot of talk at conferences about the coming mobile revolution. Over the last eight years it has gone from that point to Mobile SEO sessions being among the most consistently packed rooms at every conference – because we need to know this stuff!

The Impending Death of Apps?

I did some work for a coupon site not long ago and they had a really great mobile coupons app for the iPhone and Android devices so their “Mobile” page consisted of a big call-to-action asking visitors to download the application. Not long ago if people were searching for “Mobile Something” it would be perfectly fine to ask them to download your app. However, in looking at the click-paths and digging around in some of the keyword-level metrics, it became apparent that the vast majority of visitors did not want to download anything at all. They were on a mobile device surfing the web and wanted what they wanted right away. We decided to move the app download CTAs over to the sidebar and populate the main content area of the page with coupons that were accessible (and usable) from a mobile device without having to download the app. I can’t share specific metrics, but suffice it to say that we made the right decision. You can see the page here. The point is: If you have a game or some multi-featured app that can’t be accessed from a mobile browser then perhaps an app is the best way to go. But the days of having apps built simply to show a bare-bones version of the same content you can find on the website is probably coming to an end. #ThankGod PS: Five minutes after publishing this I saw a tweet from AJ Kohn linking to a great ZURBlog post that also supports this probability.

Google’s Advice

As yet another example of how fast things change in this industry, I wrote the Adaptive Design paragraph below on Wednesday, June 6th, 2012 around lunch time, and by 2pm ET Barry Schwartz, while covering the iSEO panel at SMX Advanced, wrote that Google’s Webmaster Trends Analyst, Pierre Far, finally gave Google’s “official” stance on mobile SEO best practices. You can read the whole thing here, but the short version is: Use responsive / adaptive design.

Adaptive / Responsive Design

Not long ago it was ok to send people to a separate mobile “version” of your site, but these days more and more mobile experts are touting Responsive Design (some call it Adaptive Design), which avoids the problems of duplicate content, worrying about auto-redirects, unintentional cloaking, and the fact that some tablets are more like laptops and some phones are little tablets.

Other Stuff

Basic On-Page: Even the most basic on-page tactics have changed. I no longer write title tags for search engines. I write them like they are sales copy, and if that means not fitting a secondary or tertiary keyword, or a keyword variant, into the tag, then so be it. The days of listing out keywords separated by commas, pipes or colons are gone – long-gone IMO, but looking at the SERPs I’d say about 90% of SEOs haven’t gotten the memo yet.

Negative SEO: Are people buying crappy links to try and get your site banned or caught in a Penguin filter? Are people paying Mechanical Turks to drive up the related query count for things like BrandX Rip Off and BrandX Scam? If so, what do you do about it?

New Crawlability Developments: Yes, Google can see and execute JavaScript. Yes, Google can and will see stuff that you have blocked in the robots.txt file (such as that script you think hides all your affiliate links), because robots.txt blocks robots, not browsers, and Chrome is a browser. Additionally, Google Preview Bot needs to render everything on a page in order to generate a preview. And since when did Google actually care about anyone’s privacy or copyrights other than their own? How do you deal with AJAX sites, and what in the world is a hashbang?
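For the hashbang question: under the AJAX crawling scheme Google proposed in 2009 (and later deprecated), a crawler that saw a #! URL would request the same URL with the fragment moved into an _escaped_fragment_ query parameter, and the server was expected to return an HTML snapshot for it. The Python sketch below shows that URL mapping; the set of escaped characters is based on the original specification and is worth verifying against it before relying on this.

```python
from urllib.parse import urlsplit

def _escape_fragment(fragment):
    """Percent-encode the characters the AJAX crawling scheme required escaping:
    control characters and space, '#', '%', '&', '+', and anything non-ASCII."""
    out = []
    for ch in fragment:
        if ord(ch) <= 0x20 or ch in "#%&+" or ord(ch) >= 0x7F:
            out.append("".join(f"%{b:02X}" for b in ch.encode("utf-8")))
        else:
            out.append(ch)
    return "".join(out)

def to_escaped_fragment(url):
    """Map a 'pretty' #! URL to the _escaped_fragment_ URL a crawler would request."""
    base, sep, fragment = url.partition("#!")
    if not sep:
        return url  # not a hashbang URL; nothing to do
    joiner = "&" if urlsplit(base).query else "?"
    return f"{base}{joiner}_escaped_fragment_={_escape_fragment(fragment)}"

print(to_escaped_fragment("http://www.example.com/ajax.html#!key=value"))
# http://www.example.com/ajax.html?_escaped_fragment_=key=value
```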

Site crawlability has always been a major SEO issue. The more complicated the code gets, the smarter the bots get. The smarter the bots get the more complicated the code gets. And as bots get smarter and code gets more complicated SEOs have to keep piling on little pieces of information and learning new skills.

Video Search: Getting video thumbnails used to be difficult. You had to use your own player or come up with some creative ways to get around the fact that Google didn’t want to show thumbs for pages that only had embedded YouTube videos from YouTube.com. Nowadays all it takes is an XML video sitemap (sometimes not even that) and Ta-Da! You have yourself a video thumbnail. And another task gets added to the list.
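A minimal video sitemap is mostly boilerplate. The Python sketch below builds one with ElementTree using a few of the basic tags from Google's video sitemap namespace (thumbnail_loc, title, description and player_loc); the URLs and titles in the usage example are placeholders, and Google supports additional optional tags not shown here.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"

def video_sitemap(videos):
    """Build a minimal video sitemap.

    videos: list of dicts with page_url, thumbnail_url, title, description and
    player_url (e.g. an embedded player's URL). Only basic tags are emitted.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("video", VIDEO_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for v in videos:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = v["page_url"]
        video = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        for tag, key in (("thumbnail_loc", "thumbnail_url"), ("title", "title"),
                         ("description", "description"), ("player_loc", "player_url")):
            ET.SubElement(video, f"{{{VIDEO_NS}}}{tag}").text = v[key]
    return ET.tostring(urlset, encoding="unicode")

demo = video_sitemap([{
    "page_url": "http://www.example.com/videos/widget-demo",
    "thumbnail_url": "http://www.example.com/thumbs/widget-demo.jpg",
    "title": "Widget demo",
    "description": "A two-minute walkthrough of the widget.",
    "player_url": "http://www.example.com/player?video=widget-demo",
}])
print(demo)
```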

So SEO is dead?

Hardly. If an exponentially expanding task list is in any way associated with job security (and I think it is), SEO is a great field to be in for the foreseeable future. While those who fail to adapt are going to be looking for a job, everyone else has plenty of work to do. If your plan is to adapt you’ll need to incorporate the following two words into your SEO life:

#1 – FOCUS

I think we’re going to need far more specialists, and those who remain generalists will have to know who the specialists are so they can outsource as needed (see #2). That is why one of seOverflow’s goals for our entire team is to find out what most interests each of us and to encourage everyone to delve deeper into that specific area. For me it happens to be eCommerce SEO, but I’d also love to be an “expert” in video search and schema markup while keeping my technical SEO skills razor sharp. In the end, I may have to give up on video search in order to stay up-to-date on eCommerce.

As long as you know what you want to focus on and make it a priority to learn everything you can about that one area you will have a career in SEM / SEO / Internet Marketing / Competitive Webmastering / Inbound Marketing…

Or whatever they’ll call it next.

#2 – TEAMWORK

The dilemma is that you’re not going to be able to know how to do everything, but everything has to be done. For agencies this means you need to get your top SEOs to specialize in areas that complement each other so you can provide clients the depth of knowledge, as well as the breadth of skills, needed to reach the top of the search results.

For an in-house SEO (and really for any SEO) this means being involved in the community, attending search conferences, networking online and knowing who is the expert in whatever it is you need at any given time.

When I have a video search question I go straight to Mark Robertson of ReelSEO. He isn’t cheap and his schedule is tight – and there is a reason for that. I recently had a stumper of a question about eBay and Amazon stores so I asked the folks at Channel Advisor and got the answer I needed. Likewise, I am always happy to answer a tough technical or eCommerce SEO question for a friend because I like to help people in the SEO community, and because they usually know things that I don’t – and I’ll be hitting them up with a question soon enough. I’m constantly going back and forth with Andrew Shotland (local search guru, but also a very strong technical SEO), Marty Martin (had a few Magento questions for him), and my fellow Q&A Associates and Mozzers. If I have an enterprise content or online news stumper I’ll try and get in touch with Marshall Simmonds (Define Media Group, former SEO for The New York Times). Dr. Pete can take a stab at just about anything I throw his way. Debra Mastaler can help me think outside the box on building links in tough industries, and our team here at seOverflow is chock full of experts in PPC, Link Building, Local Search, Project Management and more. You do not have to know everything (I certainly don’t), but it helps to be on good terms with people whose opinions and knowledge you can trust regarding the stuff you don’t know. Likewise, when they hit you up for your input, be generous.

You actually read all the way down this far? Come find me at a conference because you’ve earned a pat on the back!

I didn’t set out to write a post this long. I just wanted to make a point: You can’t know everything about everything in SEO unless you are way smarter than anyone I know. And if that’s the case, you should probably be working to cure cancer or sending your own rockets into space instead of getting websites to rank higher. Either that or you should be working for the other guys. As for the rest of us, we can know a lot about a few things or a little about a lot of things. In my opinion, the SEO of the future today needs to focus on depth within themselves and their chosen SEO niche, and breadth within their team, community and professional support network. That was my original point, and it only took me about 11,500 words to say it.

What about you?

Each skill you develop is a building block in your career. Which blocks are you going to stack together? I need to know so I can call you when I get stumped in your area of expertise. #Srsly

One thing that many SEOs fail to do is help a business understand how it can start empowering staff to think about SEO when doing tasks they would do normally.

A few examples include:

Conferences: If you’re speaking at a conference, see if you can get a link from your speaker bio.

Press Releases: Sending out press releases to local news? Syndicate it online.

Press Mentions: Keep track of people who mention your brand online but don’t link to it, reach out & ask for the link.

Sponsorships: Make sure if you sponsor local businesses that the sponsorship includes a mention on the website.

These are just a few examples, but SEO can be integrated into a business far more than people think. It’s all about a small shift in mentality (and a little bit of education).

I love these sorts of big posts. Well done, Everett! I really understand everything in your article first hand, especially what makes a good SEO (and that was just an aside!). Spot on, mate!

This post is the gift that keeps on giving. ;^)

I almost never comment on blogs, but this is just fantastic. I feel like SEO is changing and you really get it!

Reading this wonderful article makes me feel more inspired and interested in doing my job.

Thanks for sharing this.

Now isn’t that a great excerpt on SEO in 2012! I’m printing it out and laying it next to my bed for a bedtime read.

A great way to approach this industry is to stay sane while building a good foundation, rather than going crazy after every Google update, industry novelty or latest SEO craze. SEO can be as simple as 1, 2, 3:

1) Create great content (Like this post).

2) Code cleanly and fashionably

3) Converse with your industry influencers/clients.

I am a former Social Media Manager in the Real Estate Industry, and I have now transitioned to SEO Specialist (in training) for a prominent law firm in Atlanta. I appreciate all of your expertise and your constant reminder that we are ALL continuous learners.

I was wondering if you had any advice or suggestions for SEO relating to legal services.

Thanks Much!

Maurquis,

I do. It starts with not using your client’s website as the URL in a comment on an SEO blog.

Everett, that’s a hell of a post! It well qualifies for a book, you know.

SEO is indeed getting more and more complex, just as life in general. So, thanks for helping us SEO folks (newbies in particular) make sense out of the bulk of tasks one may encounter these days.

I’d only like to add that, as far as international SEO is concerned, one of the challenges it poses is tracking progress in foreign (= personalized) SERPs. And it so happens that we have JUST added almost a dozen personalization parameters (city, region, country, language, Safe Search, etc.) to Rank Tracker which one can set when checking rankings.

To the best of my knowledge, there has been no tool that does it so far. I also know that many SEOs have been on the lookout for a functionality like this, so, the information could be useful to your readers. More detail here – https://www.link-assistant.com/news/personalized-google-results.html

Thanks again for the post!

Alesia,

I went back and forth about approving this comment, but decided in the end that you put some thought into it and the resource you’re linking to could be of value so I’ll let it slide. And thanks for the compliments on the post. I’m glad you liked it!

Thanks for this article — I needed this to keep me focused and on the right track 🙂

Impressive article, thank you! Yup, it sure ain’t short, but you make a lot of very valid points. These days, to be good at SEO you almost need to be a content writer, a techie and a marketer all in one… and finding people like this is hard, especially good ones.

Loved all your references too.

thanks

I usually don’t leave comments but I did read your entire post. Awesome job and thanks for sharing! Yes, I did actually read to the bottom 😛

This is the single best post I have ever come across on SEO. Comprehensive, specific in all the right areas and a unifying theme throughout. Pure gold. Thank you!

Robert

With so many different tasks involved in SEO work nowadays, what kinds of management tools or SEO process software are available and really useful?

Excellent article Everett. Thank you!

I handle the SEO for a large ecommerce affiliate site so this post is gold dust to me. Plenty of work for me to be getting on with! Thanks again.

Just saved this to my desktop as “The Complete SEO Manual”. Even if that isn’t completely true, it’s true enough. Thanks for this epic piece.

Wow what a great article.

You must have put a lot of work into that.

As a small business owner who runs my own website it sort of makes you realise that getting seen on the internet nowadays is very hard.

Really great post, may get the “Best SEO post of 2012”! One SEO task that is not really talked about is information architecture, done with SEO in mind. This is extremely powerful for very large websites, driving tons of long tail traffic if done well.

It’s one of the many tasks SEOs have to learn now, with more to come!

What a great post! I think it deserves to win you, Everett, one more SEMMY award!!! I’d vote for it.

How long did it take you to write this article? I’m just curious, because the article is fantastic 🙂

A cup of coffee later, with the jitters from too much caffeine (or is it from feeling overwhelmed by the number of tasks I have to handle for my clients?), all I can say is thanks a lot. Yes, it is a daunting task to handle this growing list of specialties for our clients, but they need our services all the more. Thank you for reminding us of the challenge, which is our opportunity to serve.

Yep, my eyes did start to glaze over when I got to sitemap stuff. I’m a novice here and I would much rather be out there climbing and skiing. BUT, I pay someone to get it right. How do I know if they’re on the case? To be fair, how can anyone keep up with the Google monster? They’re turning the screws, before long it will be “Pay to Search”.

Easily one of the most informative, jam-packed and interesting SEO posts I’ve ever read… I love all the useful insights and valuable links. If only the masses could fully understand that really good links are just the ones coming inbound. 🙂 Thanks for the great read, Everett.

There were parts that made my head hurt a lil’ but in a good way.

Other parts of the post were downright fascinating.

Then the classics:

“In other words, search and social are married in the church of Google and they’ll be having a family soon.”

“It must be similar to trying to take a sip of water from a fire hose.” lol

Big-Ups to the Moz Top 10

Cheers!

V

Holy cow! This is such an excellent article. I knew SEO was exploding, but I had never taken the time to put down on paper all of the tasks an SEO needs to think about. I knew the list would be huge… but I didn’t think it would amount to 11,500 words.

Ha ha! Now I know why I have had a headache every week for the past 6 months. Thank you for diagnosing my “problem”. Time to specialize further…

Just want to say thanks for this article. Just brought on a bunch of people to the team in the past couple months. Your article just became required reading for the team. The ‘must read blogs’ from the various links here are perfect for any SEO.

I agree wholeheartedly with your discussion about SEO and Marketing convergence. Great post!

Not done reading yet, but one of the best industry articles I’ve read for some time. Good forward thinking. Spot on.

Wow, that’s a lot of good info. It’s no wonder SEOmoz gave this article the top spot in their list this week.

I read through the whole thing and it’s all good, but when you say “demographics”, my company mostly serves a demographic that doesn’t fit. We do SEO for small businesses, and I regularly find little to no current information about SEO trends for those types of companies. Maybe the upcoming Local Search article will help, but here’s our problem. We know how important social media and SEO are NOW (two years ago I was still scratching my head), but a lot of small businesses have a really hard time getting likes, fans, circle members, etc., because, well, no one cares too much about who maintains your neighbor’s yard, who changes your co-worker’s oil, where your best mate buys his comic books, etc. There’s just TOO much stuff to care about, and I think people are getting tired of caring. Even a company with really good traditional marketing can struggle with social media because, once again… who cares?

Maybe I’m being pessimistic, and for a marketer that’s not always a good thing, but listening to my customers’ customers is a big part of what I’m saying.

Thoughts?

Justin,

You make some good points. I can only answer some of your questions with an example. I live in a very small town called Floyd in the Blue Ridge Mountains of Virginia. When I was looking for a place to take my truck for repairs, I went with these guys and I’m sure you can guess why…

https://www.facebook.com/joesgarageva

They’re about as small as a business comes in a town about as small as they come (one stoplight in the entire county!). So if they can do it so can your clients. It isn’t easy, but that’s why it works. If it was easy everyone would do it and then it wouldn’t work.

Good luck!

That’s a great example, and congrats to them. Very impressive. I have a client that is similar and was savvy enough to get heavily involved in Places reviews three years ago. Their reviews are a centerpiece of their marketing, and I love having them in my portfolio. The reason it works is that their owner is web-savvy and only signs checks and networks online.

One real obstacle I run into is that a lot of social networking can only be done by the person who benefits from it. Back in the day you could (and I guess still can) hire an SEO to build your link network and do your blog posting, commenting and forum posts, but with social networking it’s very difficult to hire someone to log into your Gmail account and post through that account all over the internet. But the owner doesn’t have the time, the wherewithal or the knowledge to do that themselves. I’m sure in time that will change, but by the time the majority of business owners/marketers are doing this, the rules will have changed again. We may not even be using Google at that time.

I think Google is really shooting itself in the foot. For SEOs, the selling point used to be that placing an ad in the phonebook cost a ton and yielded few results, so having an optimized site and some off-page SEM would be cost-effective and VERY profitable. Now, however, it’s getting more expensive to market online and the phonebook is getting cheaper. Scary…

Looks like you are going to have a busy day replying to all comments but that’s a sign of great blogging right? Thanks for the inputs and the feedback. I’d never heard of you guys before today but consider me a subscriber from now on!

I think you have hit the nail on the head with social marketing. You can have multiple managers on Google Plus pages, but who in a small business is going to maintain them? It’s hardly a job for an external SEO consultant, who won’t know what we are doing day to day. I run training courses, and I post photos of good sessions on our profiles (if I have the time). Most social media consultants will set up a page for you, but interacting every day is another matter. I can see how it could work.

This difficulty faking it is why I think it will be an increasingly important ranking signal.

Great post. I had to read all the way to the bottom in order to figure out why you kept showing pictures of blocks! I do agree, however, that it’s impossible to specialize in everything these days, and keeping the Jenga tower of SEO up requires blocks from many different specialists.

Thanks for taking so much time to put together a really interesting post.

As head of a very small internet marketing consultancy, only just able to start thinking about getting individual team members to specialise (there are only three of us so far), it is quite scary.

OMG, this is the biggest blog article ever. OK, I will print it in a book and I will read it!

As usual, this is all great stuff E! Especially appreciate your insights into PR and link building practices. A certain company we both know well needs to understand this 😉

NJ

Nate, Indeed. Congrats on the gig!

Sad… I started reading this, then stopped once I saw “alt tags”.

In reply to @Really? …Sad that someone who thinks they are so smart doesn’t understand the importance of alt tags in vertical image search, especially for an eCommerce website. Think about the way people shop for clothes. Google image search is little more than a giant window-shopping experience for many and alt tags are still an important piece of meta data since robots still aren’t very good at “seeing” an image.

Oh well… I always expect a few trolls to use an unfounded criticism of someone else’s hard work to make themselves sound smart, when in fact they don’t provide anything valuable to the community themselves and like to use fake names in their comments. Thank you for filling that role.