We recently published a guide to eCommerce catalog content pruning on Moz and had to leave a few things out for the sake of brevity and to stay on topic. For those of you about to embark on an eCommerce content audit, here are a few more things to consider.

Logical URL Structures FTW

Separating product pages and category pages from other page types is easy if the pattern is in the URL. Imagine a spreadsheet with 10,000 URLs in which everything is off the root.

www.domain.com/blue-widget

www.domain.com/green-widgets

www.domain.com/doohickey1

www.domain.com/stuff

…

Can you tell which two are product pages and which two are categories just by looking at the URL? Good. With four URLs it’s possible. With 4,000 or 40,000 it’s not a fun project.

Analyzing that site and making recommendations at scale would be a nightmare. In these situations, we typically request a database export of all product and category page URLs so we can do a VLOOKUP and append “Product” or “Category” to those page types. Asking the client for database dumps is time-consuming and often met with groans from the development team or database admin.
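If you would rather script the VLOOKUP step than do it in a spreadsheet, the sketch below shows the same join in Python. It assumes each database export is a CSV with a column named `url` (a hypothetical column name; use whatever the client's export actually contains) and that the URLs are formatted the same way as in your crawl.

```python
import csv
import io


def load_urls(csv_text, column="url"):
    """Read one URL column from a CSV export (the column name is an assumption)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row[column].strip() for row in reader]


def tag_page_types(crawl_urls, product_urls, category_urls):
    """VLOOKUP-style join: label each crawled URL Product, Category, or Other."""
    lookup = {url: "Product" for url in product_urls}
    # Category labels are applied second, so they win if a URL appears in both exports.
    lookup.update({url: "Category" for url in category_urls})
    return {url: lookup.get(url, "Other") for url in crawl_urls}


crawl = [
    "www.domain.com/blue-widget",
    "www.domain.com/green-widgets",
    "www.domain.com/stuff",
]
products = load_urls("url\nwww.domain.com/blue-widget\n")
categories = load_urls("url\nwww.domain.com/green-widgets\n")
print(tag_page_types(crawl, products, categories))
```

Anything labeled “Other” is worth a second look: it may be a content page, or a product the export missed.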

eCommerce platforms are going to tell you that it’s best to have a “flat” site architecture. This advice stems from an incomplete understanding of what’s involved with SEO. Yes, it is probably true that pages closer to the root receive more PageRank from important pages like the home page, and that, typically, the fewer clicks it takes to reach a URL, the better. But this advice has been taken too far. It was meant for people who put important pages three, four, five… directories deep. It was also meant for people who misapplied earlier advice and ended up with URLs like this: www.domain.com/buy-blue-widgets/cheap-blue-widgets/Acme123-cheap-blue-widget.

A Better Way

Most of the common eCommerce platforms these days will put category pages in a folder/directory called “category” or “categories” and product pages in a directory called “product” or “products.”

For example:

www.domain.com/products/product-a

www.domain.com/category/widgets

www.domain.com/blog/category/widgets

www.domain.com/blog/post-1

www.domain.com/product/product-b

A spreadsheet with thousands of URLs like the ones above would be much more manageable because I could sort and filter by page type using the URL structure.
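The same sort-and-filter step can be done outside a spreadsheet. This is a minimal sketch that tags URLs by their first directory; the prefix list is taken from the example URLs above and is an assumption you would adjust for your platform.

```python
from collections import Counter
from urllib.parse import urlsplit

# Path prefixes taken from the example URLs above; adjust for your platform.
# Matching is on the start of the path, so /blog/category/widgets is tagged Blog.
PREFIX_RULES = [
    ("/blog/", "Blog"),
    ("/products/", "Product"),
    ("/product/", "Product"),
    ("/categories/", "Category"),
    ("/category/", "Category"),
]


def page_type(url):
    """Tag a URL by its first directory; bare hosts like www.domain.com/... are accepted."""
    if "://" not in url and not url.startswith("//"):
        url = "//" + url  # lets urlsplit treat the host as a host, not as part of the path
    path = urlsplit(url).path
    for prefix, label in PREFIX_RULES:
        if path.startswith(prefix):
            return label
    return "Other"


urls = [
    "www.domain.com/products/product-a",
    "www.domain.com/category/widgets",
    "www.domain.com/blog/category/widgets",
    "www.domain.com/blog/post-1",
    "www.domain.com/product/product-b",
]
print(Counter(page_type(u) for u in urls))
```

Off-the-root URLs like www.domain.com/blue-widget fall through to “Other”, which is exactly why a flat structure forces you back to database exports.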

Note: I wouldn’t ask a developer to completely rewrite the way the site handles product and category URLs unless it was a “home grown” eCommerce platform. Most of the big players are pretty set in their ways, and even when not optimal, they’re still pretty good. Also, I would not change URLs on an existing site unless there was a lot to gain. I do not consider “easier analysis” worth thousands of site-wide redirects and URL rewrites.

Let’s have a look at three very common platforms and how you might set them up from scratch, if given the option:

Shopify

I like the way Shopify URLs are set up out of the box. The problem is that a lot of SEOs ask developers to bend over backward to remove /products/, /collections/ and /pages/ from the URL structure. It’s possible. But why would you? To save one little directory? Earth to SEOs: directories are there to provide a logical path for people and spiders to follow. Removing important directories from the URL is like removing the taxonomy from your navigation, throwing every category into one drop-down, and then asking your shoppers to browse the entire site at random until they find what they’re looking for. Try that and see what happens to conversion rates.

Product URL: /products/product-name

Category URL: /collections/category-name

Content URL: /pages/page-name

Magento

This platform provides a few different options, including putting the entire category taxonomy within the product URLs (not recommended). On new sites, I like to set it up so that category paths are not included in product URLs: go to System > Configuration > Catalog > Search Engine Optimizations and set “Use Categories Path for Product URLs” to “NO.” Unfortunately, this means all products are off the root. I would prefer that they go into /products/ first, but this isn’t possible without significantly altering the way the platform handles URLs beyond what’s available in the settings menus.

Product URL: /product-name

Category URL: /category-1/category-2

Content URL: (Use WordPress /blog/post-name or /articles/post-name, etc.)

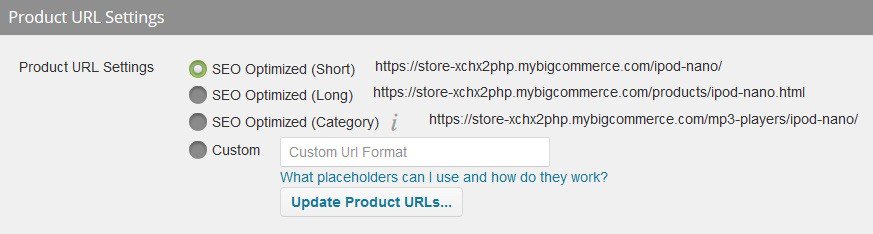

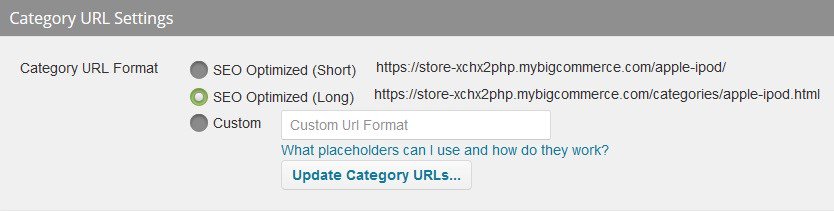

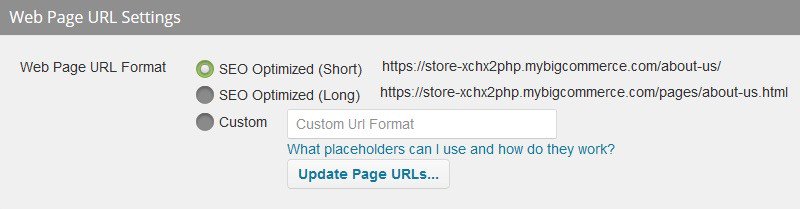

Bigcommerce

Every eCommerce platform has its pros and cons. For what it’s worth, I’m a fan of what Bigcommerce has done with regard to URL structure. They offer several options out of the box but go further to make “custom URL formats” easy to create.

Product URL: /products/product-name/

*Note: This is a custom URL format. Unlike the “SEO Optimized (Short)” option, it does not include the category structure in product URLs. However, unlike the “SEO Optimized (Long)” version, it does not include .html at the end. To achieve this, simply put /products/%productname%/ in the custom URL field.

Category URL: /categories/cat-1/cat-2/category-name/

*Note: This is a custom URL format. You would put /categories/%parent%/%categoryname%/ into the custom URL field.

Content URL: /pages/page-name/

*Note: This is a custom URL format. You would put /pages/%pagename%/ into the custom URL field.

Brand Pages as Facets/Filters

Being able to apply filters to catalog results (categories or search) is very helpful for customers, but can be a problem for SEOs. If you have a product line in which “brands” are important keyword searches (e.g. Breitling Watches, Tag Heuer Watches, etc.) then each brand should have its own indexable landing page. For example, here’s the Breitling page on JomaShop.com. Someone searching on Google for “Breitling watches” or “mens Breitling watches” might arrive on this page and be happy with what they found.

Contrast that with the Men’s Watches category on SamsClub.com. Shoppers can use the filters on the left side to see only Invicta brand watches. However, the filtered URL has a rel="canonical" tag pointing back to the main Men’s Watches category. There are no brand landing pages for “Invicta Watches” or “Men’s Invicta Watches” on the Sam’s Club website.
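To check whether a brand-filtered URL can rank on its own or canonicalizes back to its parent category, you can read its rel="canonical" tag. This is a minimal sketch using Python’s standard HTML parser; the example.com URLs and the ?brand= parameter are hypothetical, and a page with no canonical tag is treated as indexable by default.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.href = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.href is not None:
            return
        attrs = dict(attrs)
        rels = (attrs.get("rel") or "").lower().split()
        if "canonical" in rels and attrs.get("href"):
            self.href = attrs["href"]


def canonical_target(page_url, html):
    """Absolute canonical URL for a page, or None if the page declares none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return urljoin(page_url, finder.href) if finder.href else None


def indexable_on_its_own(page_url, html):
    """True if the page has no canonical tag or its canonical points at itself."""
    target = canonical_target(page_url, html)
    return target is None or target == page_url


filtered = "https://www.example.com/mens-watches?brand=invicta"
html = '<head><link rel="canonical" href="/mens-watches"></head>'
print(indexable_on_its_own(filtered, html))  # False
```

A brand filter that returns False here is behaving like the Sam’s Club example: useful for shoppers, but not a landing page for brand searches.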

Finding Links That Are Broken or Provide a Poor UX

Finding internal and external links to product pages that no longer exist, or that are permanently out of stock (and removed from the main category navigation) is an important part of doing an eCommerce content audit. Here’s a good how-to guide for checking for broken links with Screaming Frog.

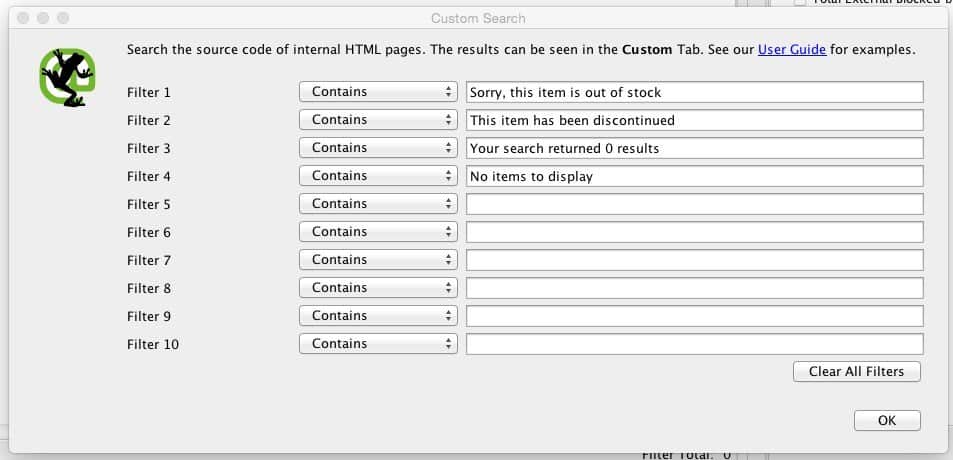

Don’t forget to look for “soft 404s.” This includes product pages that have been removed from the navigation, but not the site. The problem is they return a 200 status code, which means they’re not going to appear on your radar unless/until you start looking at metrics like traffic and sales further on in the auditing process. The solution is to copy whatever message is given (e.g. “has been discontinued” or “no longer in stock”) and look for URLs with that snippet using Screaming Frog’s custom search configuration (not to be confused with “custom extraction,” discussed below).
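If you already have the crawled HTML saved, the same soft-404 search can be run as a script. This sketch assumes your crawl export gives you (url, status_code, html) rows; the two phrases are only examples and should be replaced with the exact wording your product templates display.

```python
import re

# Example messages; copy the exact wording your templates display.
SOFT_404_PHRASES = ["has been discontinued", "no longer in stock"]


def find_soft_404s(pages, phrases=SOFT_404_PHRASES):
    """Return URLs that answer 200 OK but contain a 'gone' message.

    pages: iterable of (url, status_code, html) tuples from a crawl export.
    Matching is case-insensitive on the raw HTML, so a phrase split by
    markup (e.g. "no longer <b>in stock</b>") will not be found.
    """
    pattern = re.compile("|".join(re.escape(p) for p in phrases), re.IGNORECASE)
    return [url for url, status, html in pages
            if status == 200 and pattern.search(html)]


pages = [
    ("/a", 200, "<p>This item Has Been Discontinued.</p>"),
    ("/b", 200, "<p>Add to cart</p>"),
    ("/c", 404, "has been discontinued"),  # a real 404, already caught elsewhere
]
print(find_soft_404s(pages))  # ['/a']
```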

Scraping Your eCommerce Site for Content Audits

When performing an eCommerce content audit, you may want to collect specific category page content and product page excerpts, for example to analyze content blocks for length, originality and more. Our current “go-to” tool for crawling websites is Screaming Frog (though we’re looking into Botify). Screaming Frog’s “extraction” feature makes it possible to scrape specific snippets of content for later analysis using CSSPath, XPath, and RegEx. Learn more in this post.
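Once a content block has been extracted, measuring it is straightforward. The sketch below is a standard-library stand-in for a CSSPath-style extraction: it collects the text inside elements with a given class and counts the words. The class name `category-description` is a placeholder, not something any particular platform is known to use.

```python
from html.parser import HTMLParser

# Void elements never get a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class BlockExtractor(HTMLParser):
    """Collect text inside any element whose class list contains css_class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.depth = 0  # > 0 while inside a matching element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif self.css_class in (dict(attrs).get("class") or "").split():
            self.depth = 1

    def handle_startendtag(self, tag, attrs):
        pass  # self-closing tags like <br/> hold no text

    def handle_endtag(self, tag):
        if self.depth and tag not in VOID_TAGS:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def block_word_count(html, css_class="category-description"):
    """Word count of the extracted block; the class name is a placeholder."""
    parser = BlockExtractor(css_class)
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


html = ('<div class="category-description intro"><p>Shop our <b>blue</b> widgets.'
        '<br>Free shipping.</p></div><p>Footer text here</p>')
print(block_word_count(html))  # 6
```

Word counts like this make it easy to sort thousands of category pages and spot thin or empty descriptions.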

Watch out for these sticking points!

#1 Data Collection

The first sticking point comes when trying to set everything up and pull in data from the website itself, as well as from Google Analytics, Google Search Console, Moz, Majestic, Copyscape and more. This how-to guide on Moz should help, but it is based on how we did things in 2014. Things have changed, and your processes might be different, so the best advice we can give here is to document what you do until you have a process that interns and marketing coordinators can systematically follow. The strategist can be called in when problems arise, such as uncrawlable websites and catalogs with 100,000+ SKUs.

Just remember what you’re trying to do here: inventory every URL and see related metrics for each of them, including HTTP status codes, traffic, sales, links and social shares, so you can begin to analyze the situation and make recommendations. It may take some spreadsheet wizardry to connect all of the data points to their corresponding URLs, but there are usually a few Excel wizards hanging around most offices.
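Most of that spreadsheet wizardry comes down to making URLs from different tools match: one tool reports full URLs, another reports paths, a third adds tracking parameters. Below is a minimal sketch of one normalization and merge approach. The rules it applies (drop scheme, “www.”, query string and trailing slash) are assumptions that suit many sites but not all, and the metric names are hypothetical.

```python
from urllib.parse import urlsplit


def url_key(url, default_host):
    """Reduce a URL to a join key so crawl, analytics and link exports line up.

    Assumptions: scheme, "www.", query string, fragment and trailing slash are
    dropped; path case is kept because paths are case-sensitive.
    """
    if url.startswith("/") and not url.startswith("//"):
        url = "//" + default_host + url  # path-only rows, as in many analytics reports
    elif "://" not in url and not url.startswith("//"):
        url = "//" + url                 # bare host such as www.domain.com/stuff
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    return host + (parts.path.rstrip("/") or "/")


def merge_sources(sources, default_host):
    """sources: list of (metric_name, {url: value}); returns {key: {metric: value}}.

    If two URLs collapse to the same key, the later value overwrites the earlier one.
    """
    merged = {}
    for name, rows in sources:
        for url, value in rows.items():
            merged.setdefault(url_key(url, default_host), {})[name] = value
    return merged


crawl = {"https://www.domain.com/products/blue-widget/": 200}
analytics = {"/products/blue-widget": 120}
search_console = {"http://domain.com/products/blue-widget?utm_source=x": 45}
print(merge_sources([("status", crawl), ("sessions", analytics),
                     ("clicks", search_console)], "www.domain.com"))
```

Because query strings are dropped, parameter URLs merge into their parent here; if parameter URLs matter on your site, keep the query in the key instead.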

#2 Deer in Headlights

Another sticking point comes when all of the data has been collected and it’s time to start analyzing and making informed decisions that could result in massive changes to the site. Deer in headlights. We’ve been there. Either you don’t know where to start, or you’re afraid to pull the trigger (or can’t convince the powers that be to do so).

We have created a handy Pocket Guide to Content Audit Strategies that might help you decide where to go from here. It may help to just start on one thing and go from there, much the same way a deer will finally get away from headlights by taking that first step.

For example, you could start by finding all URLs with parameters (e.g. ?sort=price) to determine whether they even need to be in the inventory. Hint: If they all canonicalize back to the parent page, they probably don’t need to be in there because, technically, they’re not indexable on their own. Keep your analysis to indexable URLs. Other issues, such as goofed-up rel="canonical" tags, are best covered in a technical SEO audit, which we tend to run concurrently with content audits because of the added efficiencies and closely related issues.
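Here is a minimal sketch of that first pass, assuming your crawler can export each URL alongside its canonical URL (an empty string when there is none). It lists which parameters appear and keeps only URLs that are self-canonical or have no canonical tag.

```python
from urllib.parse import parse_qs, urlsplit


def parameter_urls(urls):
    """Map each URL that has a query string to the parameter names it uses."""
    found = {}
    for url in urls:
        query = urlsplit(url).query
        if query:
            found[url] = sorted(parse_qs(query, keep_blank_values=True))
    return found


def indexable_only(rows):
    """rows: (url, canonical) pairs from a crawl export.

    Keeps URLs with no canonical or a self-referencing one; URLs that
    canonicalize elsewhere (e.g. ?sort=price back to the parent) are dropped.
    """
    return [url for url, canonical in rows if not canonical or canonical == url]


rows = [
    ("https://www.domain.com/widgets", "https://www.domain.com/widgets"),
    ("https://www.domain.com/widgets?sort=price", "https://www.domain.com/widgets"),
    ("https://www.domain.com/widgets?page=2&sort=name", ""),
]
print(parameter_urls(u for u, _ in rows))
print(indexable_only(rows))
```

The last row survives because it has no canonical at all, which is usually the kind of thing to hand off to the technical SEO audit.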

Or you could start by looking at rankings, traffic, links and sales. These metrics show whether anyone knows a page exists in the first place, which helps you find huge chunks of content that add little or no value to the website as a whole.
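One quick way to surface those chunks is to flag every URL where all of the metrics are zero. The field names below are hypothetical placeholders for whatever columns your merged inventory has, and a zero-signal page is a candidate for review, not an automatic removal.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    url: str
    ranking_keywords: int = 0   # hypothetical column names; map to your own data
    sessions: int = 0
    external_links: int = 0
    revenue: float = 0.0


def no_signal_pages(pages):
    """URLs where every metric is zero: nobody appears to know these pages exist."""
    return [p.url for p in pages
            if not (p.ranking_keywords or p.sessions or p.external_links or p.revenue)]


pages = [
    PageMetrics("/a", sessions=10),
    PageMetrics("/b"),
    PageMetrics("/c", revenue=19.99),
]
print(no_signal_pages(pages))  # ['/b']
```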

HUGE eCommerce Sites

Enterprise eCommerce sites with tens of thousands of SKUs are always tough. Not only are they a pain to crawl, but site owners rarely have the stomach for the kind of pruning you are likely to recommend. That makes getting data on those URLs even more important to prove your case.

Crawling Big Sites

The best thing you can do from the beginning is to limit the crawler to indexable pages. This means any page that Google/Bing will not index (e.g. blocked by robots.txt, canonicalizing to the root, robots noindex meta tags, redirecting URLs, etc.) should be ignored. On small sites, we may allow some of these (particularly redirecting URLs) to be crawled and pulled into the content audit, but on larger sites these things may be best handled as part of a technical SEO audit.
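For the robots.txt part of that filter, Python’s standard library can pre-screen a URL list before you load it into a crawler or spreadsheet. This is a minimal sketch; note that `urllib.robotparser` does simple prefix matching and does not implement Google’s wildcard extensions, so treat the result as an approximation. Noindex tags, canonicals and redirects still have to come from crawl data.

```python
from urllib.robotparser import RobotFileParser


def allowed_urls(urls, robots_txt, user_agent="Googlebot"):
    """Keep only URLs that robots_txt allows user_agent to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(user_agent, url)]


robots = "User-agent: *\nDisallow: /search\nDisallow: /cart\n"
urls = [
    "https://www.domain.com/products/a",
    "https://www.domain.com/search?q=widget",
    "https://www.domain.com/cart",
]
print(allowed_urls(urls, robots))  # ['https://www.domain.com/products/a']
```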

I usually start the crawl, see some URLs I don’t want crawled, adjust filters and settings in Screaming Frog, then start the crawl over again. This might happen several times before the crawler is working efficiently. Always make notes of the filters you have to create to crawl the site, as these can be used to make technical SEO recommendations, like blocking certain page types via robots.txt or canonicalizing variant URLs.
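One way to keep those notes useful is to store each exclude pattern next to the recommendation it implies and count how many URLs it catches. The sketch below uses full-URL regular expressions in the `.*pattern.*` style common in crawler exclude settings; the two patterns and their notes are hypothetical examples.

```python
import re

# Hypothetical patterns noted while re-running the crawl; each note records
# the technical recommendation the pattern might become.
EXCLUDES = [
    (r".*\?sort=.*", "sort parameters: canonicalize to the unsorted category"),
    (r".*/search/.*", "internal search results: consider blocking in robots.txt"),
]


def apply_excludes(urls, excludes=EXCLUDES):
    """Split URLs into (kept, hits), where hits counts URLs excluded per note."""
    compiled = [(re.compile(pattern), note) for pattern, note in excludes]
    kept, hits = [], {note: 0 for _, note in excludes}
    for url in urls:
        for pattern, note in compiled:
            if pattern.fullmatch(url):  # the pattern must match the whole URL
                hits[note] += 1
                break
        else:
            kept.append(url)
    return kept, hits


urls = [
    "https://www.domain.com/widgets",
    "https://www.domain.com/widgets?sort=price",
    "https://www.domain.com/search/?q=blue",
]
kept, hits = apply_excludes(urls)
print(kept)
print(hits)
```

The hit counts double as evidence when you recommend the robots.txt or canonical changes later.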

Also, consider switching crawlers. Screaming Frog is great, but isn’t always the best option for extremely large websites. Try Botify and DeepCrawl as alternatives.

See our post titled Do You Really Need to Crawl All 3,435,198 Pages? for more tips on how to crawl enterprise eCommerce sites.

Copywriting for Thousands of Pages

The rate at which pages are improved/rewritten depends largely on the client’s budget. The sooner you get them an estimate of how many pages need work, the sooner they can find the money to do it. The more budget, the faster the project will go. Even if they need to rewrite 1,000 product pages and only have the budget to do 10 per week, an eCommerce content audit will help you prioritize so you’re always working on the most important pages first. You’ll have it all done eventually (~ 2 years) and will be seeing consistent traffic/sales improvements along the way that could help you get more budget to expedite the project.
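The arithmetic behind that estimate is simple: 1,000 pages at 10 per week is 100 weeks, or roughly 1.9 years. The sketch below shows the estimate plus a priority-ordered batch schedule. The priority score (revenue, traffic or a blend) is a hypothetical input you would take from the audit.

```python
import math


def weeks_needed(page_count, per_week):
    """Weeks required to rewrite page_count pages at per_week pages per week."""
    return math.ceil(page_count / per_week)


def rewrite_schedule(pages, per_week):
    """pages: (url, priority_score) pairs. Returns weekly batches, highest priority first."""
    ordered = [url for url, _ in sorted(pages, key=lambda p: p[1], reverse=True)]
    return [ordered[i:i + per_week] for i in range(0, len(ordered), per_week)]


weeks = weeks_needed(1000, 10)
print(weeks, round(weeks / 52, 1))  # 100 1.9
print(rewrite_schedule([("/a", 5), ("/b", 50), ("/c", 20)], 2))  # [['/b', '/c'], ['/a']]
```

Re-running the schedule with a larger per_week value is a quick way to show a client what extra budget buys.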

Waking the Dragon

Any major, site-wide change will typically result in “waking the dragon,” a term our Sr. Inbound Strategist Dan Kern uses to explain the voracious appetite Googlebot will have for a site after migrations, mass-scale redirects, and similar changes.

This can be good and bad, but it’s mostly good for enterprise eCommerce sites with hundreds of thousands of pages. All of those robots noindex meta tags you added two months ago might finally get recrawled. On the other hand, if excessive crawling is a problem, you may want to combine several implementations into one release (e.g. block internal search results, noindex image gallery page types, and canonicalize category filter and sort URLs) so the hungry Googlebot only has to rampage through the site once to find them all.

More Tips?

There are countless little tips and tricks to getting a huge site inventoried, analyzed and updated when performing a content audit. What are your favorites?
